Modern artificial intelligence models, despite their brilliance, suffer from a fundamental efficiency flaw. The narrator compares current systems like ChatGPT or Gemini to a Michelin-star chef who plants peanuts from scratch every time a customer orders a simple sandwich.

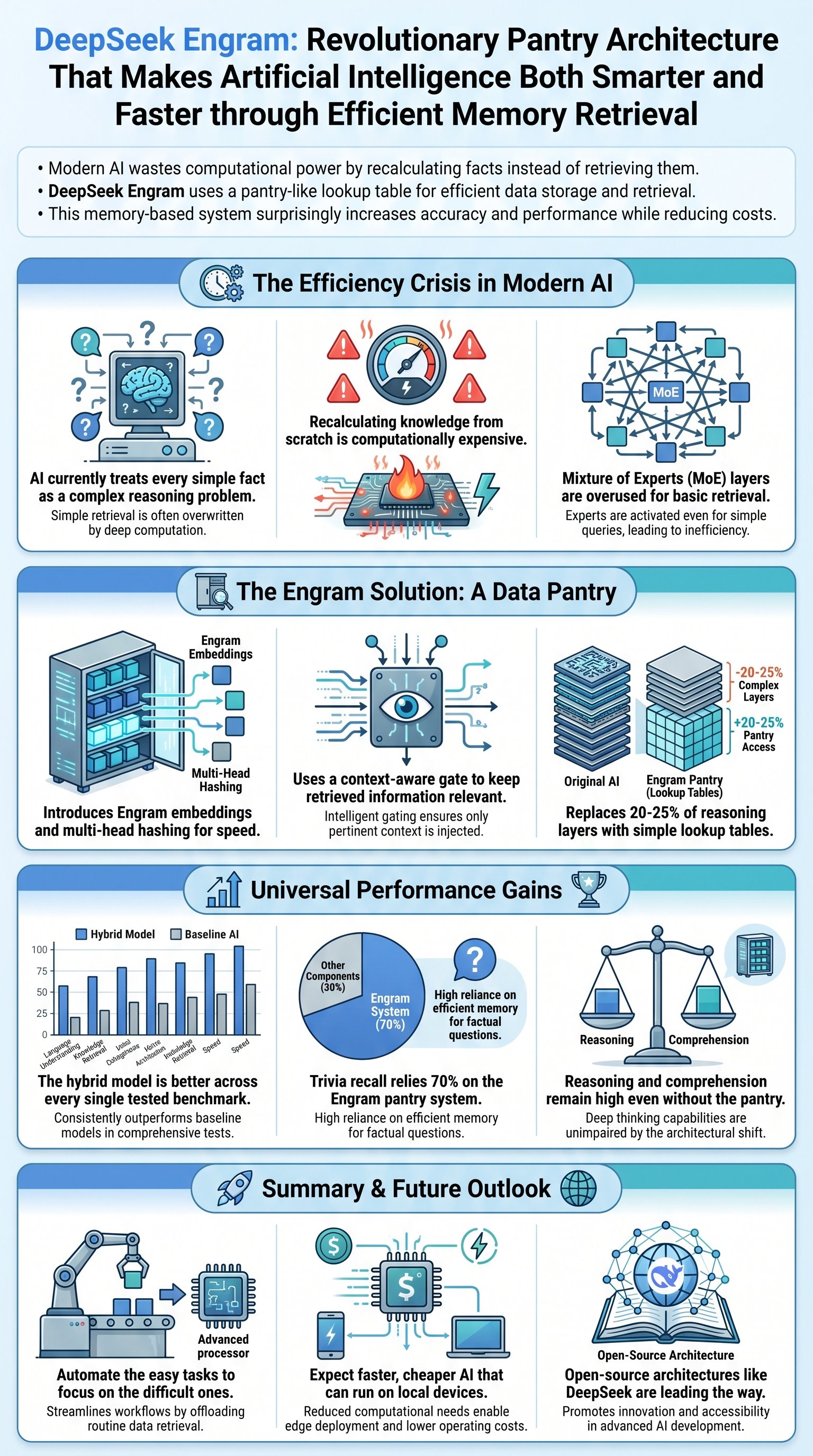

This recalculate-from-scratch behavior forces the AI to push even basic factual recall through its complex reasoning layers. When asked who Alexander the Great was, the model performs dense mathematical calculations rather than simply looking up the answer.

DeepSeek has introduced a potential solution to this massive waste of compute power. Their new research paper presents a technology called Engram, which functions as a pantry for the AI chef.

By providing a dedicated space for ingredient retrieval, the model no longer needs to grow the peanuts for every request. It can simply grab the pre-stored factual ingredients it needs, saving immense amounts of energy and time.
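To make the pantry idea concrete, here is a minimal sketch of a lookup memory. It is an illustration only, not the paper's implementation: it assumes the memory is keyed by a hash of the most recent token IDs, and the names `make_table`, `slot_for`, `lookup`, `TABLE_SIZE`, and `EMBED_DIM` are all hypothetical.

```python
import random

TABLE_SIZE = 1024  # hypothetical number of memory slots
EMBED_DIM = 4      # hypothetical width of each stored vector


def make_table(size=TABLE_SIZE, dim=EMBED_DIM, seed=0):
    """Build a table of stored vectors (random here; learned in a real model)."""
    rng = random.Random(seed)
    return [[rng.uniform(-1.0, 1.0) for _ in range(dim)] for _ in range(size)]


def slot_for(ngram, size=TABLE_SIZE):
    """Map a tuple of token IDs to a slot with a simple polynomial hash."""
    h = 0
    for tok in ngram:
        h = (h * 1000003 + tok + 1) % size
    return h


def lookup(table, token_ids, n=2):
    """Fetch one stored vector per position, keyed by the trailing n-gram."""
    out = []
    for i in range(len(token_ids)):
        ngram = tuple(token_ids[max(0, i - n + 1): i + 1])
        out.append(table[slot_for(ngram, len(table))])
    return out


table = make_table()
vectors = lookup(table, [5, 9, 5, 9])
# Positions 1 and 3 both end in the pair (5, 9), so they fetch the same slot.
print(vectors[1] == vectors[3])
```

The point of the sketch is the cost profile: each retrieval is one hash and one table read, no matter how much knowledge the table holds, whereas the dense path multiplies through full weight matrices for every token.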

Interestingly, replacing 20% to 25% of the model's complex reasoning parts, specifically the Mixture of Experts (MoE) layers, with this lookup mechanism makes the AI smarter. The reported loss curves improve significantly.

To prevent the AI from retrieving irrelevant information, DeepSeek implemented a context-aware gating mechanism. This ensures that the retrieved data matches the specific requirements of the current task.

If the retrieved memory does not align with the context, the gate drops to zero. This filters out the "rotting fish" (incorrect or irrelevant data) before it can ruin the final output of the reasoning process.
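The gating behavior can be sketched in a few lines. This is a toy under stated assumptions, not the paper's formula: it assumes the gate is a sigmoid over the cosine similarity between the current hidden state and the retrieved memory, and the `threshold` and `sharpness` parameters are hypothetical knobs chosen for illustration. A sigmoid only approaches zero rather than reaching it exactly, which is close enough to show the effect.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm > 0.0 else 0.0


def gate(hidden, memory, threshold=0.5, sharpness=20.0):
    """Near 1 when memory aligns with the context, near 0 when it does not."""
    score = sharpness * (cosine(hidden, memory) - threshold)
    return 1.0 / (1.0 + math.exp(-score))


def merge(hidden, memory):
    """Add the retrieved memory to the hidden state, scaled by the gate."""
    g = gate(hidden, memory)
    return [h + g * m for h, m in zip(hidden, memory)]


hidden = [1.0, 0.0, 0.0]
relevant = [0.9, 0.1, 0.0]    # points the same way as the context
irrelevant = [0.0, 1.0, 0.0]  # orthogonal to the context

print(gate(hidden, relevant) > 0.99)    # gate is almost fully open
print(gate(hidden, irrelevant) < 0.01)  # gate is almost fully closed
```

With the gate nearly closed, `merge(hidden, irrelevant)` returns a vector almost identical to `hidden`, so a bad retrieval leaves the downstream computation essentially untouched.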

Benchmarks for the Engram technique show improvements across the board. Unlike many research papers where a new method is better at some things but worse at others, this technique is measurably superior in every category.

This breakthrough suggests a shift in how we build neural networks. By automating the easy parts like factual recall, the AI can focus its heavy-duty reasoning capabilities on truly difficult logical tasks.

The researchers found that when the Engram module is disabled during testing, the AI's ability to answer trivia drops by 70%. However, its reading comprehension remains at 93%, showing a clear functional split inside the model.

This division of labor allows the AI to use its traditional layers strictly for logic and understanding, while the pantry handles memorization. It is a more specialized and efficient architecture than previous monolithic designs.

The implications for the future are profound. This technology could lead to AI systems that are much cheaper to run and small enough to operate locally on personal devices without expensive subscriptions.

There are still limitations, such as the placement of the Engram module. If placed too deep in the network, the model has already wasted too much compute before checking the pantry, making the lookup redundant.

The paper is freely available, providing a transparent alternative to proprietary systems. This move by DeepSeek democratizes high-performance AI architecture for the global research community.

Ultimately, Engram suggests that sometimes the most effective way to improve complex intelligence is to integrate simple, proven concepts like lookup tables. It is a milestone in the journey toward faster, more accessible AI.