The Thermodynamic Blueprint: Understanding Energy's True Nature

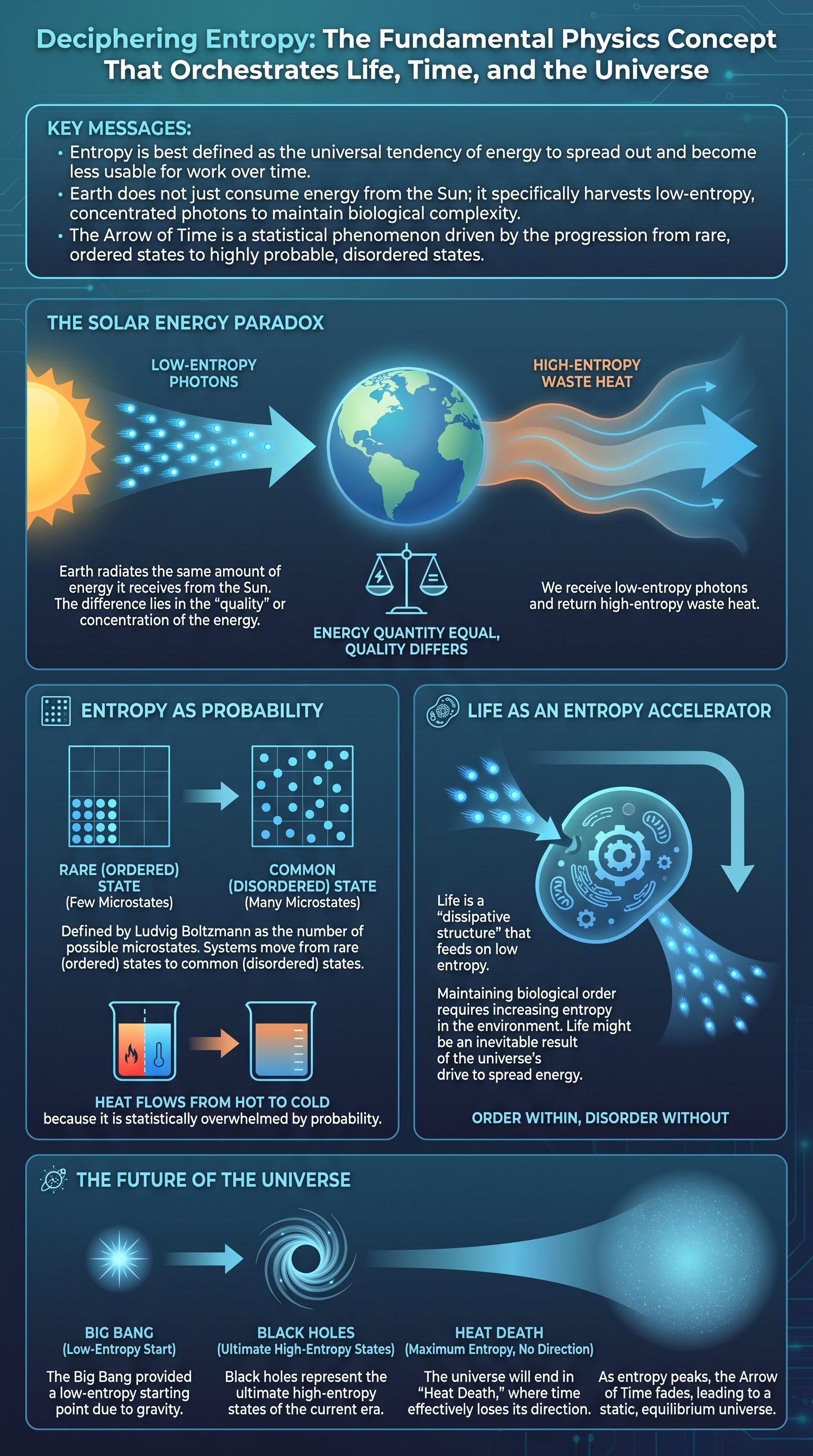

To grasp the concept of entropy, one must first dismantle a common misconception about the Earth's relationship with the Sun. Most people believe the Earth simply receives 'energy' from our star, but the reality is more nuanced. The Earth is in a near steady state of radiative balance: it radiates almost exactly as much energy back into space as it absorbs. If it did not, the planet would keep heating up. The critical difference is one of quality, not quantity: the energy arriving from the Sun is concentrated and high-quality (relatively few high-energy photons of visible light), whereas the energy leaving the Earth is spread out and low-quality (many more low-energy infrared photons). This transformation is the core of thermodynamics.
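To put rough numbers on "same energy, different quality", the sketch below treats both the incoming sunlight and Earth's outgoing radiation as blackbody radiation. That is a simplification, and the two temperatures are assumed round figures: about 5772 K for the solar surface and about 255 K for Earth's effective emission temperature. It uses two standard blackbody results: the mean photon energy is about 2.701 k_B T, and radiation carrying energy Q at temperature T carries entropy of roughly 4Q/(3T).

```python
import math

# Assumed effective radiating temperatures, in kelvin (round, illustrative figures).
T_SUN = 5772.0    # solar photosphere
T_EARTH = 255.0   # Earth's effective emission temperature

K_B = 1.380649e-23              # Boltzmann constant, J/K
ZETA_3 = 1.2020569031595942     # Riemann zeta(3)

def mean_photon_energy(temperature_k: float) -> float:
    """Average energy (J) of a blackbody photon: pi^4 / (30 zeta(3)) * k_B * T."""
    return math.pi**4 / (30 * ZETA_3) * K_B * temperature_k

def radiation_entropy(energy_j: float, temperature_k: float) -> float:
    """Approximate entropy (J/K) carried by blackbody radiation: S = 4Q / (3T)."""
    return 4 * energy_j / (3 * temperature_k)

energy = 1.0  # the same 1 J arrives and leaves (radiative balance)
photons_in = energy / mean_photon_energy(T_SUN)
photons_out = energy / mean_photon_energy(T_EARTH)
entropy_ratio = radiation_entropy(energy, T_EARTH) / radiation_entropy(energy, T_SUN)

print(f"photons out per photon in: {photons_out / photons_in:.1f}")
print(f"entropy out / entropy in:  {entropy_ratio:.1f}")
```

Under these assumptions both ratios reduce to T_SUN / T_EARTH, a bit over 20: each joule leaves Earth spread over roughly twenty times as many photons, carrying roughly twenty times as much entropy as it had on arrival.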

The history of this discovery dates back to 1824 with Sadi Carnot, a young French engineer. Carnot sought to improve the efficiency of steam engines to help France regain industrial dominance. He realized that an ideal engine's efficiency depends only on the temperatures of its hot and cold reservoirs, not on the working substance or the details of the machine. Even in a frictionless, 'perfect' engine, not all of the heat can be converted into work, because some heat must always be discarded into the cold reservoir to complete the cycle. This unavoidable 'waste' heat was the first hint of what we now call entropy.

Key insight: Efficiency is fundamentally limited by the temperatures of the heat source and the heat sink; 100% efficiency would require a source at infinite temperature or a sink at absolute zero.
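In modern notation, the limit in the key insight is usually written η_max = 1 − T_c / T_h, with both temperatures measured in kelvin (Carnot himself reasoned with the older caloric theory; this form came later). A minimal sketch, where the boiler and condenser temperatures are hypothetical values chosen only for illustration:

```python
def carnot_efficiency(t_hot_k: float, t_cold_k: float) -> float:
    """Maximum fraction of heat that any engine can turn into work.

    Both temperatures must be absolute (kelvin), with t_hot_k > t_cold_k >= 0.
    """
    if t_cold_k < 0 or t_hot_k <= t_cold_k:
        raise ValueError("need t_hot_k > t_cold_k >= 0 (kelvin)")
    return 1.0 - t_cold_k / t_hot_k

# Hypothetical steam engine: boiler at 450 K, condenser at 300 K.
print(f"{carnot_efficiency(450.0, 300.0):.1%}")   # 33.3%

# Only a sink at absolute zero would allow 100% conversion.
print(carnot_efficiency(450.0, 0.0))               # 1.0
```

Even this idealized, frictionless engine must reject two thirds of its heat to the condenser; real engines do worse.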

Rudolf Clausius later formalized these observations, introducing the term 'entropy' in 1865 as a measure of how far energy has spread out and, with it, how much of that energy is no longer available to do work. He famously summarized the first two laws of thermodynamics: the energy of the universe is constant, and the entropy of the universe tends toward a maximum. In other words, energy cannot be created or destroyed, but it spontaneously spreads from concentrated states toward dispersed ones.
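Clausius defined the entropy change of a body that exchanges heat Q reversibly at temperature T as ΔS = Q / T. A small worked example shows both laws at once: when heat leaks from a hot body to a cold one, the energy total is unchanged, but the total entropy rises. The sketch assumes both bodies are large enough that their temperatures stay fixed; the specific numbers are illustrative.

```python
def entropy_change_of_heat_flow(q_joules: float, t_hot_k: float, t_cold_k: float) -> float:
    """Net entropy change (J/K) when heat q flows from a hot reservoir to a cold one.

    Each reservoir is assumed large enough that its temperature stays constant,
    so its entropy change is simply Q / T.
    """
    ds_hot = -q_joules / t_hot_k    # hot reservoir loses heat q
    ds_cold = q_joules / t_cold_k   # cold reservoir gains the same heat q
    return ds_hot + ds_cold

# Illustrative case: 1000 J leaking from a 400 K body into 300 K surroundings.
print(round(entropy_change_of_heat_flow(1000.0, 400.0, 300.0), 3))   # 0.833
```

The result is positive whenever t_hot_k > t_cold_k and exactly zero only when the temperatures are equal, which is why heat flows spontaneously from hot to cold and never the reverse.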

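| Process Type | Energy State | Usability Afterward | Entropy Produced |
|---|---|---|---|
| Reversible (idealized) | Stays concentrated | Preserved | None (total ΔS = 0) |
| Irreversible (every real process) | Becomes dispersed | Degraded | Positive (total ΔS > 0) |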

Energy is most useful when it is bundled or clumped together. Think of a pressurized steam tank versus the ambient air in a room. The room's air holds an enormous amount of kinetic energy spread across trillions of trillions of moving molecules, but that energy is too dispersed to be harnessed without a colder reservoir for it to flow into. Entropy is the quantity that measures this dispersion. It is also why no perpetual motion machine can run forever by recycling its own heat: every real process inevitably 'pays a tax' in the form of increased entropy.

The core principle of the universe is not just the conservation of energy, but the inevitable degradation of its quality.

Statistical Probability: Why Systems Move Toward Equilibrium

In the 1870s, Ludwig Boltzmann provided a deeper, microscopic explanation for entropy through the lens of statistics. He realized that entropy is not a mysterious force but a matter of probability. In any large system, there are countless ways to arrange the particles (known as microstates) that produce the same overall appearance, or macrostate. A highly ordered state, like a solved Rubik's Cube, has only one possible configuration. Conversely, a 'messed up' cube has quintillions of configurations that all look equally disordered.
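The Rubik's Cube comparison can be made exact with standard cube combinatorics: 8 corner pieces with 3 orientations each, 12 edge pieces with 2 orientations each, minus the constraints a physical cube imposes. The sketch below counts every reachable configuration; exactly one of them is "solved".

```python
import math

# Corners: 8! arrangements x 3^7 twists (the last corner's twist is forced).
# Edges: 12! arrangements x 2^11 flips (the last edge's flip is forced).
# Divide by 2: corner and edge permutations must have matching parity.
corners = math.factorial(8) * 3**7
edges = math.factorial(12) * 2**11
reachable = corners * edges // 2

print(f"{reachable:,}")   # 43,252,003,274,489,856,000
print(f"natural log of the count: {math.log(reachable):.1f}")
```

That is about 43 quintillion microstates, of which one looks solved; a random sequence of twists is therefore almost certain to land in a scrambled-looking macrostate.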

Consider two metal bars, one hot and one cold. When they touch, energy packets (quanta) hop randomly between atoms. While it is technically possible for all energy packets to move into the hot bar, making it even hotter, it is mathematically improbable. There are simply far more configurations where the energy is shared equally between the two bars. As the number of atoms increases—reaching trillions of trillions in everyday objects—the probability of energy concentrating spontaneously becomes so low that it effectively never happens.
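The two-bar argument can be checked by direct counting in a simple textbook model (an "Einstein solid", where each atom is an oscillator that can hold any whole number of quanta). The number of ways to place q identical quanta on n oscillators is C(q + n − 1, q). The bar sizes and the number of quanta below are hypothetical toy values, vastly smaller than a real object.

```python
from math import comb

def multiplicity(n_oscillators: int, q_quanta: int) -> int:
    """Ways to distribute q indistinguishable quanta among n oscillators."""
    return comb(q_quanta + n_oscillators - 1, q_quanta)

def split_distribution(n_a: int, n_b: int, q_total: int) -> list[int]:
    """Microstate count for every split: q_a quanta in bar A, the rest in bar B."""
    return [multiplicity(n_a, q_a) * multiplicity(n_b, q_total - q_a)
            for q_a in range(q_total + 1)]

# Toy bars: 300 oscillators each, sharing 100 quanta.
counts = split_distribution(300, 300, 100)
total = sum(counts)
most_likely = max(range(len(counts)), key=counts.__getitem__)

print("most probable number of quanta in bar A:", most_likely)
print(f"P(all 100 quanta in bar A) = {counts[100] / total:.2e}")
```

Even with only 600 "atoms", the even split is the single most probable outcome and the all-in-one-bar split has a probability far below one in a trillion trillion; with the trillions of trillions of atoms in a real bar, the odds become incomparably more lopsided.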

1. Energy packets move randomly through atomic lattices.
2. Disordered macrostates have vastly more microstates than ordered ones.
3. Systems naturally drift toward the most probable state: equilibrium.
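The three steps above can be sketched as a toy simulation. The hopping rule here is a simplification of our own, not a physical model of a real lattice: at each step a randomly chosen oscillator, if it holds a quantum, passes one quantum to another randomly chosen oscillator in either bar. All the energy starts crowded into bar A, and nothing in the rule pushes it anywhere in particular.

```python
import random

def simulate_hopping(n_a: int, n_b: int, q_total: int, steps: int, seed: int = 1) -> list[int]:
    """Toy model of quanta hopping at random between two touching bars.

    Oscillators 0..n_a-1 form bar A and the rest form bar B. Each step picks a
    random oscillator; if it holds a quantum, one quantum hops to another
    randomly chosen oscillator. Returns the number of quanta in bar A after
    every step.
    """
    rng = random.Random(seed)
    n = n_a + n_b
    quanta = [0] * n
    for i in range(q_total):          # start with all the energy in bar A
        quanta[i % n_a] += 1
    in_a = q_total
    history = []
    for _ in range(steps):
        donor = rng.randrange(n)
        if quanta[donor] > 0:
            receiver = rng.randrange(n)
            quanta[donor] -= 1
            quanta[receiver] += 1
            # Update the bar-A tally: +1 if the quantum entered A, -1 if it left A.
            in_a += (receiver < n_a) - (donor < n_a)
        history.append(in_a)
    return history

history = simulate_hopping(n_a=100, n_b=100, q_total=200, steps=20_000)
late = history[len(history) // 2:]
print("quanta in bar A after step 1:", history[0])
print("late-time average in bar A:", round(sum(late) / len(late), 1))
```

Purely random hops drain the initial concentration until bar A holds roughly half the quanta, after which the count merely fluctuates around that even split.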

Note: Entropy is often called 'disorder,' but it is more accurately a measure of the 'number of ways a system can be arranged while looking the same' (strictly, it is proportional to the logarithm of that number).

This statistical approach explains why an egg never un-breaks and why heat never flows from cold to hot. It isn't that the laws of motion prevent these events; it's that the odds are so astronomically stacked against them that they would not occur within the lifetime of the universe. This transition from 'unlikely' to 'likely' states is what gives our universe its directionality.
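A simple calculation shows how fast the odds become "astronomically stacked". Assuming each gas molecule is independently equally likely to be in either half of a box, the chance that all N of them happen to be in the left half at once is (1/2)^N. The sketch works with the base-10 logarithm, because the probability itself underflows a floating-point number long before N reaches everyday sizes.

```python
import math

def log10_prob_all_in_left_half(n_molecules: float) -> float:
    """log10 of the chance that n independent molecules all sit in the left half.

    Each molecule is in either half with probability 1/2, so the joint
    probability is (1/2) ** n; in logs that is -n * log10(2).
    """
    return -n_molecules * math.log10(2)

# 10 molecules, 100 molecules, and roughly one mole of gas.
for n in (10, 100, 6.02214076e23):
    print(f"N = {n:.3g}: log10(P) = {log10_prob_all_in_left_half(n):.3g}")
```

For ten molecules the chance is 1 in 1024, which you might actually observe; for a mole of gas the probability has more than 10^23 zeros after the decimal point.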

Caution: Do not confuse 'low entropy' with 'high complexity.' Both extreme order and extreme disorder can be simple; complexity often thrives in the transitional middle ground.

Boltzmann's insight connected the microscopic world of atoms to the macroscopic laws of thermodynamics. It transformed entropy from a vague concept of 'waste' into a precise count of possibilities. The more ways a system can be arranged without changing its outward appearance, the higher its entropy. This is why a gas expands to fill a container: there are more ways for the molecules to be spread out than clumped in a corner.
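Boltzmann's count is usually written S = k_B ln W, where W is the number of microstates and k_B is Boltzmann's constant. For the expanding gas, if the available volume doubles, each molecule has twice as many positions available, so W grows by a factor of 2^N and the entropy rises by N k_B ln 2. A minimal sketch for one mole of ideal gas (an illustrative choice), working with ln W rather than W to avoid overflow:

```python
import math

K_B = 1.380649e-23         # Boltzmann constant, J/K (exact SI value)
N_AVOGADRO = 6.02214076e23  # molecules per mole (exact SI value)

def boltzmann_entropy_change(ln_w_ratio: float) -> float:
    """Delta S = k_B * ln(W_final / W_initial), given the log of the microstate ratio."""
    return K_B * ln_w_ratio

# Gas released from half a box into the whole box:
# W_final / W_initial = 2 ** N, so ln(ratio) = N * ln(2).
n = N_AVOGADRO
delta_s = boltzmann_entropy_change(n * math.log(2))
print(f"Delta S for one mole doubling its volume: {delta_s:.2f} J/K")
```

The result, about 5.76 J/K, equals R ln 2 (R being the gas constant), the same value classical thermodynamics gives for an ideal gas doubling its volume at constant temperature, which illustrates the bridge between the microscopic count and the macroscopic law.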

The Biological Engine: How Life Thrives on Low Entropy

If the second law of thermodynamics dictates that entropy keeps rising, how can complex, highly ordered life exist? The answer is that the Earth is not an isolated system, and the second law only forbids the total entropy from decreasing. We are constantly bathed in a stream of low-entropy energy from the Sun. Life is a 'dissipative structure': a system that maintains its internal order by accelerating the increase of entropy in its surroundings. We consume concentrated energy (sunlight or food) and release it as dispersed heat.
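As a rough illustration of that export, the sketch below estimates how much entropy a person's waste heat adds to a room. The figures are assumptions chosen for round numbers: about 100 W of metabolic heat released into surroundings at 293 K. It counts only the Q / T entropy that the heat adds to the surroundings and ignores the entropy of the food and air taken in.

```python
def entropy_export_rate(power_w: float, t_surroundings_k: float) -> float:
    """Entropy (W/K) added to surroundings that absorb power_w of heat at t_surroundings_k."""
    return power_w / t_surroundings_k

# Assumed figures: a resting human releasing ~100 W of heat into a 293 K room.
rate = entropy_export_rate(100.0, 293.0)
per_day = rate * 86_400  # seconds in a day
print(f"{rate:.3f} W/K, about {per_day / 1000:.1f} kJ/K per day")
```

The point is qualitative: staying alive means continuously turning concentrated chemical energy into dispersed heat, and the entropy carried off by that heat is what pays for the body's internal order.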