Breaking the Chains of Proprietary Cloud AI

The era of digital serfdom is reaching its inevitable conclusion. For years, we have been told that high-level intelligence requires a monthly subscription and a constant tether to the cloud. Proprietary AI is a fragile ecosystem built on the shifting sands of corporate goodwill.

We have already seen the consequences of this dependency. Users of major cloud AI services frequently report sudden loss of access due to heavy server load or arbitrary policy changes. Even on a paid, fixed-rate plan, you can find yourself locked out of your own workflow when the provider decides its servers are overloaded.

Owning your AI means running it on your own hardware for free, forever, without asking for permission.

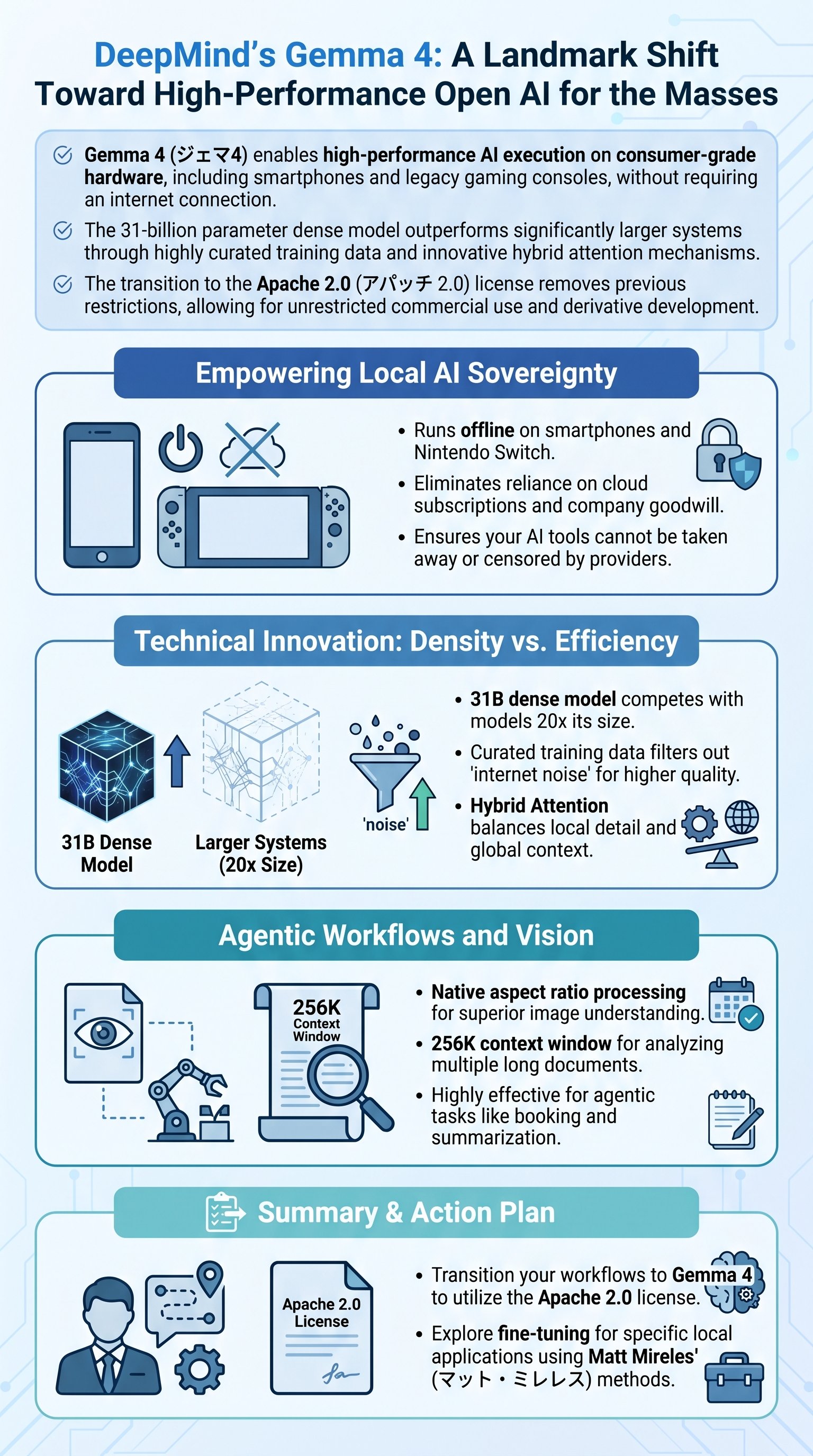

This is the primary reason local execution is the only sustainable path forward. Gemma 4 is the definitive answer to the volatility of the cloud-first model. It represents a paradigm shift in which the user regains total sovereignty over their computational tools.

Therefore, we must stop viewing AI as a service and start seeing it as a personal asset. Local models provide total privacy and guaranteed uptime that no subscription can ever match. It is the only way to ensure your intellectual property remains entirely within your control.

This is the moment where the power balance shifts back to the individual developer.

Google DeepMind has effectively handed a master key to the global community. By releasing a model that does not require an internet connection, they have democratized frontier-level intelligence for everyone. This is not just a technical update; it is a declaration of independence for the "little man".

The Local AI Checklist

Intelligence That Fits in Your Pocket

The most impressive feat of Gemma 4 is its radical efficiency. While competitors like Nvidia's Nemotron 3 Super demand gargantuan hardware configurations, Gemma 4 thrives on what many would call "potato" hardware. It is designed to be lean, fast, and surprisingly powerful.

In fact, the smallest variants of the model require only a few gigabytes of memory. This allows them to run on a first-generation Nintendo Switch, a device notoriously limited in processing power. If a beat-up gaming console can handle 2 billion parameters, the potential for mobile integration is limitless.

| Device Type | Execution Capability | Connectivity |
|---|---|---|
| Smartphone | Full Local Execution | Offline |
| Legacy Console | 2B Parameter Model | Offline |
| Web Browser | Real-time Classification | Local |
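The "few gigabytes" figure follows from simple arithmetic: weight memory is roughly the parameter count times the bytes stored per parameter. The sketch below applies that to a 2-billion-parameter model at three common precisions. It counts weights only (KV cache, activations, and runtime overhead are ignored), and the 4 GB device budget is an assumption standing in for a small device such as the original Switch.

```python
# Rough estimate of the memory needed just to hold model weights.
# Assumption: weights dominate; KV cache, activations, and runtime
# overhead are ignored, so real usage will be somewhat higher.

BYTES_PER_PARAM = {
    "fp16": 2.0,  # 16-bit floating point
    "int8": 1.0,  # 8-bit quantization
    "int4": 0.5,  # 4-bit quantization
}

DEVICE_RAM_GB = 4.0  # assumed total RAM of a small, Switch-class device


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


for precision in ("fp16", "int8", "int4"):
    need = weight_memory_gb(2e9, precision)
    fits = need < DEVICE_RAM_GB  # strict: the OS needs some RAM too
    print(f"2B @ {precision}: {need:.1f} GB, fits under {DEVICE_RAM_GB:.0f} GB: {fits}")
```

At 16-bit precision the weights alone would fill the whole 4 GB budget, while 8-bit and 4-bit quantization leave room to spare. That is why small models on constrained devices are typically run quantized.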

But do not let the small footprint fool you. The 31-billion-parameter dense model is a monumental disruptor in the open-weights space. It has been recorded beating models ten times its size on standard benchmarks. This level of optimization was previously thought to be impossible for dense architectures.

Key Performance Goal: Achieve frontier-level reasoning on consumer-grade hardware without specialized enterprise GPUs.
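Whether a 31-billion-parameter model fits on consumer-grade hardware comes down to the same bytes-per-parameter arithmetic. The sketch below picks the highest-precision format whose weights fit in a GPU's memory while reserving headroom for the KV cache and runtime buffers. The 24 GB card, the 20% headroom, and the `best_fit` helper are illustrative assumptions rather than published requirements for Gemma 4.

```python
# Pick the highest-precision format whose weights fit in GPU memory,
# keeping a fraction of VRAM free for the KV cache and runtime buffers.
# Assumptions: weights-only estimate, 20% headroom by default.

PRECISIONS = [("fp16", 2.0), ("int8", 1.0), ("int4", 0.5)]  # best quality first


def best_fit(num_params: float, vram_gb: float, headroom: float = 0.2):
    """Return (precision, weight_gb) for the first format that fits, else None."""
    budget_gb = vram_gb * (1.0 - headroom)
    for name, bytes_per_param in PRECISIONS:
        weight_gb = num_params * bytes_per_param / 1e9
        if weight_gb <= budget_gb:
            return name, weight_gb
    return None


print(best_fit(31e9, vram_gb=24.0))  # hypothetical 24 GB consumer card
print(best_fit(31e9, vram_gb=16.0))  # hypothetical 16 GB consumer card
```

Under these assumptions, a 31B dense model needs roughly 62 GB at 16-bit precision, which is out of reach for consumer cards. At 4-bit, its roughly 15.5 GB of weights fit on a 24 GB card with headroom to spare. A 16 GB card falls just short once headroom is reserved.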

Furthermore, we are seeing real-world applications pop up within days of release. Developers are already deploying offline translation and summarization apps that run entirely on mobile handsets. This proves that you do not need a multi-million-dollar data center to create meaningful value.

The era of needing a $2,000 GPU just to experiment with AI is officially over.

Indeed, the 31B model remains competitive with systems containing hundreds of billions of parameters. This efficiency is not an accident; it is the result of a ruthless focus on architecture over raw scale. It challenges the "bigger is always better" dogma that has dominated the industry for far too long.

The Architectural Secrets of Gemma 4

How did Google manage to pack so much power into such a compact frame? The answer lies in four critical architectural innovations that separate Gemma 4 from the mediocre masses. Google has moved away from the "dump the internet" approach to training.