The Dawn of the o3 Era and the End of the AI Wall

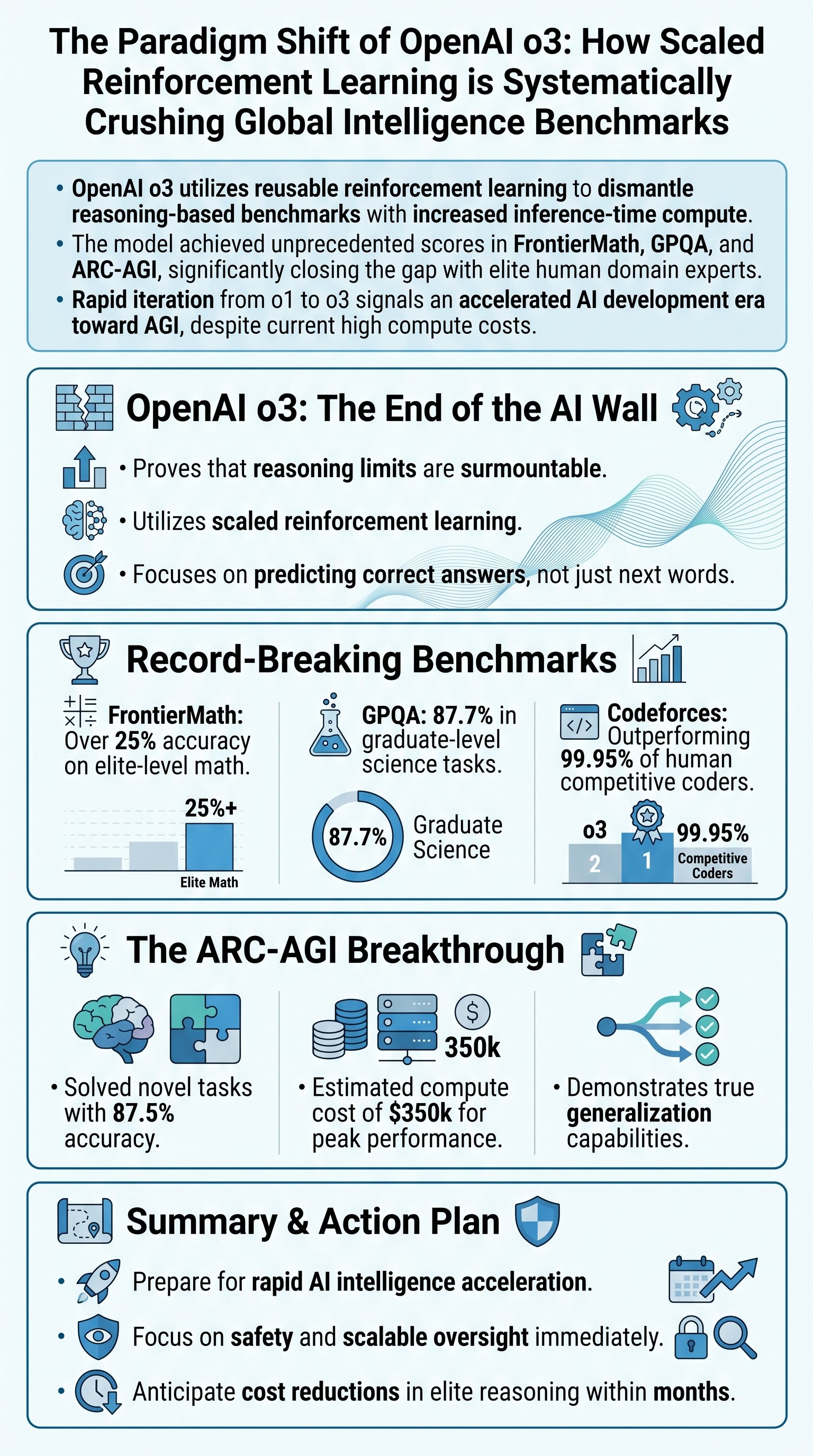

The recent announcement of o3 by OpenAI is a strong rebuttal to the narrative that artificial intelligence development has hit a performance ceiling. Rather than merely improving on previous iterations, o3 demonstrates a reusable technique for problems that can be solved by step-by-step reasoning and checked against a correct answer. The core of this advancement lies not in a secret ingredient, but in scaling reinforcement learning far beyond what was seen in the o1 series. This methodology shifts the AI paradigm from simple next-token prediction to a system that predicts a series of tokens leading to an objectively correct answer, with the answer verified during training.

OpenAI's results make a strong case that if a challenge is structured around reasoning and its steps are represented in the training data, the o-series models can eventually be trained to solve it. This is a monumental shift for the industry, as it suggests that the perceived 'wall' of AI scaling was a limitation of previous training methods rather than a fundamental barrier. The approach, as described, has the base model generate thousands of candidate solutions and uses a verifier model to rank them, creating a feedback loop that fine-tunes the system on correct reasoning chains.
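The generate-and-verify loop described above can be sketched in a few lines. This is a toy illustration under stated assumptions, not OpenAI's implementation, which is not public: `propose_chain`, `verify`, and `collect_training_chains` are hypothetical names, the "base model" is a deliberately unreliable adder, and the "verifier" is an exact-answer check rather than a learned ranking model.

```python
import random

def propose_chain(a, b, rng):
    """Hypothetical stand-in for a base model: returns (steps, answer).
    It is deliberately unreliable so that some candidates are wrong."""
    tens = (a // 10 + b // 10) * 10
    ones = a % 10 + b % 10
    if rng.random() < 0.3:  # simulate a reasoning slip in about 30% of samples
        ones += rng.choice([-1, 1])
    steps = [f"add tens: {tens}", f"add ones: {ones}", f"total: {tens + ones}"]
    return steps, tens + ones

def verify(a, b, answer):
    """Hypothetical verifier: an exact check against the known answer."""
    return answer == a + b

def collect_training_chains(problems, samples_per_problem=16, seed=0):
    """Sample many candidate chains per problem and keep only verified ones.
    The kept chains are what a fine-tuning step would then train on."""
    rng = random.Random(seed)
    kept = []
    for a, b in problems:
        for _ in range(samples_per_problem):
            steps, answer = propose_chain(a, b, rng)
            if verify(a, b, answer):
                kept.append({"problem": (a, b), "steps": steps, "answer": answer})
    return kept

chains = collect_training_chains([(12, 34), (57, 68)])
```

Every entry in `chains` is a reasoning trace that ended in a verified answer; the discarded samples never reach the training set. A real system would replace the exact check with a learned verifier and the toy adder with a language model.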

Key insight: The real news isn't just a higher score on a specific test; it is the demonstration of a generalizable approach that can be applied to any benchmark given enough compute and data.

| Model Series | Core Training Approach | Primary Goal |
|---|---|---|
| GPT Series | Large-scale Pre-training | Next-token prediction/fluency |
| o-Series | Scaled Reinforcement Learning | Objective reasoning/accuracy |

Shattering Benchmarks: From Graduate Science to Elite Coding

The performance of o3 on established benchmarks marks a sharp break from earlier models. In mathematics, o3 tackled FrontierMath, currently considered the toughest mathematical benchmark. Its problems are novel and unpublished, and they take professional mathematicians hours or days to solve; existing models typically score less than 2% on them. Under aggressive test-time compute settings, o3 achieved an accuracy of over 25%. Terence Tao has described these problems as extremely challenging, the kind that would normally call for a real domain expert, which puts the size of this jump in context.

In graduate-level science, the GPQA benchmark, designed to be difficult even for PhD-level experts, was essentially 'retired' by o3. The model scored 87.7%, surpassing human expert performance on the same set of questions. Benchmarks that were expected to stay unsolved for years are being saturated far sooner, which highlights how quickly reasoning capabilities are improving. The model doesn't just guess; it works through multi-step scientific reasoning before it commits to an answer.

- FrontierMath: Over 25% (Previous state-of-the-art was <2%)

- GPQA: 87.7% (Graduate-level science accuracy)

- SWE-bench Verified: 71.7% (Real-world software engineering tasks)

Caution: While these scores are record-breaking, they often rely on 'high-compute' settings that are not yet available for standard real-time chat interactions.
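To see why a 'high-compute' setting can raise a score, consider one common way of spending more test-time compute: sample many answers and take a majority vote. The simulation below is a sketch under assumptions and makes no claim about how o3 actually uses its compute. The function names, the 40% per-sample accuracy, and the four wrong options are all hypothetical.

```python
import random
from collections import Counter

def sample_answer(correct, p_correct, rng, n_wrong_options=4):
    """Hypothetical single model sample: right with probability p_correct,
    otherwise one of several distinct wrong answers chosen at random."""
    if rng.random() < p_correct:
        return correct
    return rng.choice([correct + k for k in range(1, n_wrong_options + 1)])

def majority_vote(correct, p_correct, n_samples, rng):
    """Draw n_samples answers and return the most frequent one."""
    votes = Counter(sample_answer(correct, p_correct, rng) for _ in range(n_samples))
    return votes.most_common(1)[0][0]

def estimate_accuracy(p_correct, n_samples, trials=2000, seed=0):
    """Fraction of simulated questions where the voted answer is correct."""
    rng = random.Random(seed)
    hits = sum(majority_vote(42, p_correct, n_samples, rng) == 42 for _ in range(trials))
    return hits / trials

low_compute = estimate_accuracy(0.4, n_samples=1)
high_compute = estimate_accuracy(0.4, n_samples=25)
```

In this toy setup a model that is right only 40% of the time per sample becomes far more reliable after 25 samples, because its wrong answers are scattered while its right answers agree. The cost is 25 times the inference compute per question, which is why such settings are hard to offer in real-time chat.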

The ARC-AGI Milestone and the Definition of Reasoning

Perhaps the most significant result came from the ARC-AGI benchmark, created by François Chollet. This test is specifically designed to measure out-of-distribution generalization: the ability to solve novel tasks that the model has never seen before. For years, many in the AI community believed that large language models could not solve ARC-AGI because they rely on pattern matching rather than true reasoning. However, o3 achieved a score of 87.5% using a high-compute configuration, shattering previous records and challenging common assumptions about what counts as reasoning in an AI system.
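For readers unfamiliar with the format, an ARC-style task gives a handful of input/output grid pairs and asks for the output of a new input. The sketch below is a toy illustration of that format only. The grids, the `CANDIDATES` menu, and `infer_rule` are hypothetical, and a fixed menu of transformations is exactly the kind of shortcut that real ARC-AGI tasks are designed to defeat.

```python
def flip_horizontal(grid):
    """Mirror each row left to right."""
    return [row[::-1] for row in grid]

def flip_vertical(grid):
    """Reverse the order of the rows."""
    return grid[::-1]

def transpose(grid):
    """Swap rows and columns."""
    return [list(col) for col in zip(*grid)]

# Hypothetical, tiny hypothesis space; real tasks need far richer rules.
CANDIDATES = {
    "flip_horizontal": flip_horizontal,
    "flip_vertical": flip_vertical,
    "transpose": transpose,
}

def infer_rule(train_pairs):
    """Return the name of the first candidate consistent with every
    demonstration pair, or None if no candidate fits."""
    for name, fn in CANDIDATES.items():
        if all(fn(inp) == out for inp, out in train_pairs):
            return name
    return None

# A made-up task: two demonstrations, then one unseen test input.
task = {
    "train": [
        ([[1, 0], [2, 0]], [[0, 1], [0, 2]]),
        ([[3, 3, 0]], [[0, 3, 3]]),
    ],
    "test": [[5, 0, 0], [0, 6, 0]],
}

rule = infer_rule(task["train"])
prediction = CANDIDATES[rule](task["test"])
```

The solver has to infer the rule from two examples and apply it to a grid of a different shape. Each real ARC-AGI task uses a rule the solver has not seen before, so no fixed list of transformations can cover the benchmark.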