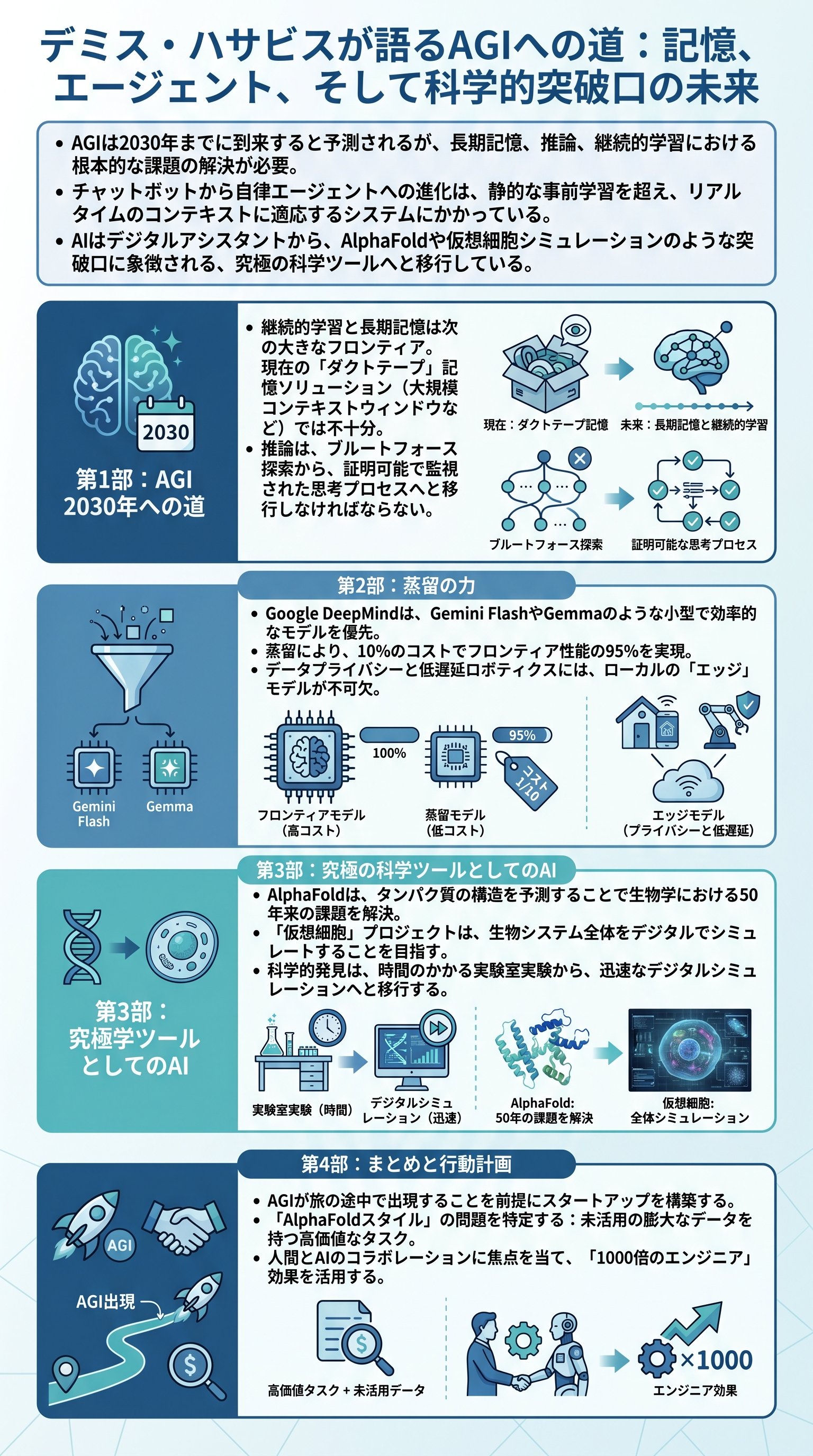

The 2030 Deadline for Global Intelligence

Artificial General Intelligence is no longer a fantasy confined to niche academic circles. It is a concrete engineering milestone with a ticking clock attached. Demis Hassabis, CEO of Google DeepMind, has estimated that AGI could arrive around 2030. If that estimate holds, it would mark a massive shift in how humanity handles information and solves problems.

Every founder and engineer should therefore plan for a world where intelligence is a commodity. Current architectures built on large-scale pre-training and reinforcement learning are only the foundation: necessary, but not sufficient for the final leap. We are witnessing the construction of the scaffolding for a digital god.

Goal: Build a system that can actively solve any problem a human can, without task-specific prompting or hand-holding.

The transition from passive chatbots to active agents is the current battleground. We have moved past the era of simple text prediction, and the next five years will likely be defined by systems that make decisions rather than merely offer suggestions. The era of the digital assistant is ending, and the era of the autonomous agent has begun.

1. Large-scale pre-training for foundational knowledge.

2. Reinforcement learning from human feedback for alignment.

3. Chain-of-thought reasoning for complex task decomposition.

However, the final architecture remains incomplete. One or two breakthrough ideas may still be required to bridge the gap, and the odds are roughly 50/50 that scaling current approaches will be enough on its own. Still, betting against innovation has historically been a losing strategy in this field.

Key: Success depends on integrating active problem-solving into the core of the model rather than treating it as an afterthought.

Breaking the Chains of Static Learning

Current models suffer from a fundamental flaw that might be called the stateless trap: they do not learn from their interactions in real time. Once training ends, the model becomes a frozen snapshot of history. To reach AGI, we must master continual learning.

Warning: A system that cannot remember its mistakes is doomed to repeat them, regardless of its parameter count.
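To make the stateless trap concrete, here is a minimal sketch assuming a hypothetical one-parameter model `y = w * x` (the `ScalarModel` class is illustrative, not any real library). Both copies finish training in a world where `y = x`; after deployment the world shifts to `y = 3x`. The frozen copy never changes, while the online copy takes one stochastic gradient descent step per interaction. This toy ignores the much harder problems a real continual learner would face.

```python
class ScalarModel:
    """Hypothetical one-parameter model: y = w * x."""

    def __init__(self, w: float):
        self.w = w

    def predict(self, x: float) -> float:
        return self.w * x

    def update(self, x: float, y: float, lr: float = 0.5) -> None:
        # One SGD step on the squared error 0.5 * (y - w*x)^2
        self.w += lr * (y - self.predict(x)) * x


# Both models finished training in a world where y = 1 * x.
frozen = ScalarModel(w=1.0)
online = ScalarModel(w=1.0)

# After deployment the world shifts to y = 3 * x.
stream = [(1.0, 3.0)] * 5
for x, y in stream:
    online.update(x, y)  # learns from every interaction
    # frozen is never updated: a snapshot of the old world

print(frozen.w, online.w)  # 1.0 2.9375
```

With a learning rate of 0.5 the online weight halves its distance to 3 on every step, while the frozen weight stays at 1 no matter how many interactions it sees.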

The human brain solves this problem through the hippocampus and REM sleep, gracefully consolidating episodic memories into long-term knowledge. Machine learning, by contrast, relies on brute-force context windows to simulate memory, an expensive and ultimately unsatisfying duct-tape solution.

- Continual learning to adapt to real-time contexts.

- Long-term reasoning to handle multi-step objectives.

- Consistent memory that doesn't vanish when the session ends.
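The memory requirements above can be illustrated with a minimal two-tier sketch. The `TwoTierMemory` class, its method names, and the word-overlap (Jaccard) ranking are all hypothetical stand-ins; a production system would more likely rank memories with learned embeddings. The point is only the shape: a session buffer that is cleared, a consolidation step that keeps what matters, and a retrieval step that is cheap compared with replaying everything into a context window.

```python
class TwoTierMemory:
    """Hypothetical memory: a per-session buffer plus a persistent store."""

    def __init__(self):
        self.session: list[str] = []    # episodic buffer, like a context window
        self.long_term: list[str] = []  # survives across sessions

    def observe(self, event: str) -> None:
        self.session.append(event)

    def end_session(self) -> None:
        # "Consolidation": copy episodes into the long-term store, then clear
        # the buffer (loosely analogous to the brain consolidating during sleep).
        self.long_term.extend(self.session)
        self.session.clear()

    def retrieve(self, query: str, k: int = 1) -> list[str]:
        # Rank stored memories by Jaccard word overlap with the query,
        # a cheap stand-in for embedding search.
        q = set(query.lower().split())

        def score(memory: str) -> float:
            m = set(memory.lower().split())
            return len(q & m) / len(q | m) if q | m else 0.0

        return sorted(self.long_term, key=score, reverse=True)[:k]


memory = TwoTierMemory()
memory.observe("user prefers metric units")
memory.observe("deploy failed because the api key expired")
memory.end_session()
print(memory.session)                              # []
print(memory.retrieve("why did the deploy fail"))  # ['deploy failed because the api key expired']
```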

Even a million-token context window, however, is insufficient for a month of live video. Processing a life in real time requires an information density we have yet to achieve. The next frontier is therefore not just more tokens, but smarter retrieval: the cost of looking up information must drop as sharply as the cost of generating it.
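A quick back-of-the-envelope calculation shows the size of the gap. The sketch below assumes video is sampled at one frame per second and that each frame costs about 258 tokens; both numbers are illustrative assumptions rather than a measurement of any particular model, and audio is ignored entirely.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def video_tokens(days: float, fps: float = 1.0, tokens_per_frame: int = 258) -> int:
    # Assumed encoding: frames sampled at `fps`, each costing `tokens_per_frame`
    return int(days * SECONDS_PER_DAY * fps * tokens_per_frame)


month = video_tokens(days=30)
print(month)              # 668736000
print(month / 1_000_000)  # 668.736
```

Under these assumptions a month of video needs roughly 669 million-token context windows, which is why the lookup side of the problem matters as much as raw window size.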

Insight: Machines do not need to mimic biology perfectly, but they must solve the same fundamental constraints of energy and time.

Search-based techniques such as Monte Carlo Tree Search (MCTS) are making a return. These ideas, popularized during the AlphaGo era, are being repurposed for language models, where reasoning becomes a search through the space of possible thoughts. By evaluating a chain of reasoning midway and intervening, we can stop a model from spiraling into logical blunders.
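The search-over-thoughts idea can be sketched with plain MCTS using the UCB1 selection rule. This is a minimal sketch on a hypothetical toy task: each "thought" applies `+1`, `*2`, or `-1` to a number, and a chain succeeds if it reaches 10 within five steps. The task, the shaped `score` function, and the exploration constant `c = 1.4` are assumptions for illustration. AlphaGo guided its search with learned policy and value networks, and a language-model version would likewise score partial reasoning with a learned model rather than the random rollouts used here. The selection step is where the search, in effect, stops investing in partial chains that keep scoring poorly.

```python
import math
import random

# Hypothetical toy task standing in for reasoning: each "thought" applies one
# operation to a number, and a chain succeeds if it reaches TARGET.
OPS = {"+1": lambda x: x + 1, "*2": lambda x: x * 2, "-1": lambda x: x - 1}
TARGET, MAX_DEPTH = 10, 5


def score(value: int) -> float:
    # Assumed shaped reward: 1.0 on an exact hit, smaller the further away we land
    return 1.0 / (1.0 + abs(TARGET - value))


class Node:
    def __init__(self, value, depth, parent=None, op=None):
        self.value, self.depth, self.parent, self.op = value, depth, parent, op
        self.children = []
        terminal = depth >= MAX_DEPTH or value == TARGET
        self.untried = [] if terminal else list(OPS)
        self.visits, self.total = 0, 0.0

    def best_uct_child(self, c: float = 1.4):
        # UCB1: average reward (exploit) plus a bonus for rarely tried branches (explore)
        return max(
            self.children,
            key=lambda ch: ch.total / ch.visits
            + c * math.sqrt(math.log(self.visits) / ch.visits),
        )


def rollout(value: int, depth: int, rng: random.Random) -> float:
    # Finish the chain with random thoughts and score where it ends up
    while depth < MAX_DEPTH and value != TARGET:
        value = OPS[rng.choice(list(OPS))](value)
        depth += 1
    return score(value)


def mcts(start: int, iterations: int = 2000, seed: int = 0) -> Node:
    rng = random.Random(seed)
    root = Node(start, 0)
    for _ in range(iterations):
        node = root
        # 1. Selection: follow UCB1 through fully expanded nodes
        while not node.untried and node.children:
            node = node.best_uct_child()
        # 2. Expansion: try one new thought from this partial chain
        if node.untried:
            op = node.untried.pop()
            child = Node(OPS[op](node.value), node.depth + 1, node, op)
            node.children.append(child)
            node = child
        # 3. Simulation: estimate how promising the partial chain is
        reward = rollout(node.value, node.depth, rng)
        # 4. Backpropagation: credit every thought on the path
        while node is not None:
            node.visits += 1
            node.total += reward
            node = node.parent
    return root


def best_chain(root: Node):
    # Read off the most-visited path; weak branches receive few visits
    ops, node = [], root
    while node.children:
        node = max(node.children, key=lambda ch: ch.visits)
        ops.append(node.op)
    return ops, node.value


root = mcts(start=1)
chain, final = best_chain(root)
print(chain, final)
```

`best_chain` follows visit counts rather than raw averages, a common way to extract a decision from MCTS because visit counts are less sensitive to a few lucky rollouts.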

The Brutal Efficiency of Small Models

The frontier is not just about the largest models; it is also about the density of intelligence. We are seeing a strong trend toward distillation and optimization, and on many tasks small models now reach roughly 95% of the performance of their giant predecessors at a fraction of the cost.
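Distillation is one route to that density. Below is a minimal sketch of the soft-target loss from knowledge distillation (Hinton et al., 2015): a small student is trained to match a large teacher's temperature-softened output distribution, measured with KL divergence and scaled by `temperature ** 2` so gradient magnitudes stay comparable across temperatures. The logits and the temperature of 2.0 are illustrative assumptions, and a real training loop would compute this on tensors and usually mix it with ordinary cross-entropy on the true labels.

```python
import math


def softmax(logits, temperature=1.0):
    # Divide by the temperature, then subtract the max for numerical stability
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    # KL(teacher || student) on softened distributions, scaled by T^2
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return temperature ** 2 * kl


teacher = [1.0, 2.0, 3.0]
print(distillation_loss([1.0, 2.0, 3.0], teacher))  # 0.0
print(distillation_loss([3.0, 2.0, 1.0], teacher))
```

A higher temperature flattens the teacher's distribution, exposing how it ranks the wrong answers; that extra signal is a large part of what the smaller student learns from.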