The Disruption of the AI Status Quo: DeepSeek’s Cost-Effective Revolution

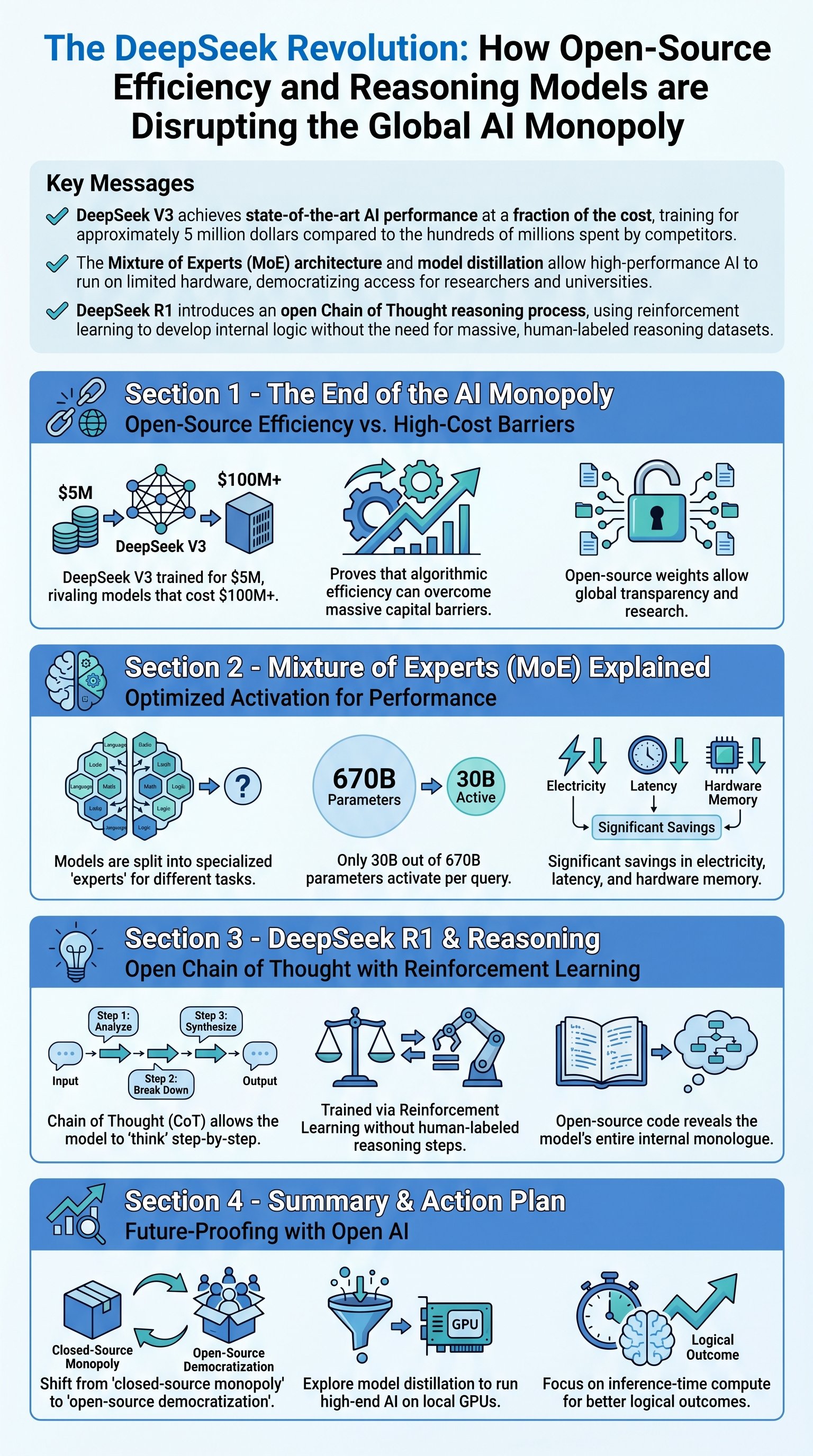

The artificial intelligence landscape has long been dominated by a handful of tech giants with nearly unlimited capital. The release of DeepSeek V3 and DeepSeek R1 has challenged that dominance. For years, the prevailing wisdom held that better AI required exponentially more data, more power, and billions of dollars in investment. DeepSeek, a Chinese research lab, has shown that algorithmic efficiency can achieve comparable results at a steep discount. While industry leaders like OpenAI or Meta might spend hundreds of millions of dollars training a single model, DeepSeek claims to have trained V3 for roughly 5 million dollars, a figure that by DeepSeek's own account covers the final training run and not earlier research and experiments. This price gap is not a minor improvement; it represents a paradigm shift in how we view the 'arms race' of Silicon Valley.

Key insight: The competitive advantage of sheer capital is eroding as algorithmic efficiency allows smaller players to produce world-class models for roughly 5% or less of traditional costs.

Historically, the barrier to entry for high-end AI was the hardware. Training a large language model (LLM) required tens of thousands of high-end NVIDIA GPUs and a power budget large enough to prompt talk of restarting nuclear plants. DeepSeek’s approach suggests that by optimizing how the model handles mathematical computations and how it utilizes its parameters, the reliance on massive server farms can be reduced. DeepSeek has also released not only the weights of its models but the papers detailing its methodology, providing a blueprint for the rest of the scientific community to follow. This level of transparency is rare in the current climate, where most companies keep their training methods as trade secrets.

| Feature | Traditional LLM Approach | DeepSeek Approach |
|---|---|---|
| Training Cost | $100M - $1B+ | Approximately $5M |
| Hardware Access | Massive private data centers | Accessible to universities/smaller labs |
| Model Architecture | Dense, fully-activated networks | Efficient Mixture of Experts (MoE) |
| Transparency | Closed-source / proprietary | Open-source weights and methodology |
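
To put the training-cost row in proportion, the short sketch below computes DeepSeek's reported cost as a share of the traditional range. The dollar amounts are the rough figures from the table, not audited numbers, and the helper name `cost_share` is purely illustrative.

```python
# Rough figures from the comparison table, in US dollars.
DEEPSEEK_V3_COST = 5_000_000
TRADITIONAL_LOW = 100_000_000
TRADITIONAL_HIGH = 1_000_000_000


def cost_share(cost: float, baseline: float) -> float:
    """Return `cost` as a percentage of `baseline`."""
    return 100.0 * cost / baseline


share_vs_low = cost_share(DEEPSEEK_V3_COST, TRADITIONAL_LOW)
share_vs_high = cost_share(DEEPSEEK_V3_COST, TRADITIONAL_HIGH)

print(f"{share_vs_low:.1f}% of a $100M training run")  # 5.0%
print(f"{share_vs_high:.1f}% of a $1B training run")   # 0.5%
```

Against the low end of the traditional range the reported cost is about 5%; against the high end it is about 0.5%.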

Architectural Innovation: Mixture of Experts (MoE) Explained

To understand why DeepSeek is so efficient, we must look at its core architecture: the Mixture of Experts (MoE). In a traditional dense model, every single parameter is activated for every token the model processes. If you ask a simple math question, the parts of the 'brain' responsible for Shakespearean poetry are still firing, consuming compute and energy. This is fundamentally inefficient. DeepSeek V3 instead divides much of the model into specialized sub-networks, or 'experts.' As a prompt passes through the system, a router in each MoE layer decides which few experts are best suited to each token. Instead of activating all 671 billion parameters, the model activates only about 37 billion per token, roughly 5.5% of the total, drastically reducing the computational cost of inference.

- Router Efficiency: A lightweight router in each MoE layer sends each token to a small number of experts.

- Lower Latency: Fewer active parameters mean faster response times for the user.

- Scalability: Different experts can be distributed across a data center and lie dormant when not needed.
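
The routing idea described above can be sketched in a few lines of plain Python. This is a generic top-k softmax gate, shown only to illustrate the principle; it is not DeepSeek V3's actual routing scheme. The toy "experts" (functions that scale their input), the random router weights, and every name in the block are assumptions made for illustration.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def make_expert(scale):
    """Toy 'expert': multiplies every element of its input by `scale`."""
    return lambda x: [scale * v for v in x]


def route(token, router_weights, top_k):
    """Score every expert, keep the top_k, and renormalize their gate weights."""
    scores = [sum(w * v for w, v in zip(row, token)) for row in router_weights]
    probs = softmax(scores)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in chosen)
    return {i: probs[i] / norm for i in chosen}


def moe_forward(token, experts, router_weights, top_k=2):
    """Run only the selected experts and mix their outputs by gate weight."""
    gates = route(token, router_weights, top_k)
    out = [0.0] * len(token)
    for i, gate in gates.items():
        expert_out = experts[i](token)  # experts not in `gates` never run
        out = [o + gate * v for o, v in zip(out, expert_out)]
    return out, gates


random.seed(0)
NUM_EXPERTS, DIM = 8, 4
experts = [make_expert(scale=i + 1) for i in range(NUM_EXPERTS)]
router_weights = [[random.gauss(0, 1) for _ in range(DIM)] for _ in range(NUM_EXPERTS)]

token = [0.5, -1.0, 0.25, 2.0]
output, gates = moe_forward(token, experts, router_weights, top_k=2)
print("active experts:", sorted(gates))
print("fraction of experts used:", len(gates) / NUM_EXPERTS)
```

With 8 toy experts and `top_k=2`, only a quarter of the expert computation runs for this token. The same principle is what lets a very large MoE model activate a small fraction of its parameters per token.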

Trend: The industry is moving away from 'one size fits all' dense models toward modular, expert-based architectures to save on electricity and hardware costs.

This efficiency extends to how the models are used by individual researchers. Because the model's weights are openly available, it can serve as the teacher in a process called distillation. In distillation, a massive model (like DeepSeek V3) acts as a teacher for a much smaller model (e.g., an 8-billion parameter model). The smaller model learns to mimic the outputs of the giant one, retaining much of the reasoning capability while being small enough to run on consumer-grade hardware like an NVIDIA RTX 4090. This means that a student or a small startup can now get close to 'GPT-level' performance on a home computer for many tasks, a feat that was unthinkable just a year ago.
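
One classic way to make a student "mimic the outputs" of a teacher is to penalize the gap between their temperature-softened output distributions. The sketch below shows that textbook soft-label loss on made-up logits. It is an illustration of the general idea, not a description of how any DeepSeek distilled model was actually trained, and all names and numbers are hypothetical.

```python
import math


def softmax(logits, temperature=1.0):
    """Softmax with temperature; higher temperature gives a softer distribution."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return (temperature ** 2) * kl


# Made-up logits over a three-token vocabulary.
teacher = [4.0, 1.0, 0.5]
good_student = [3.8, 1.1, 0.4]   # close to the teacher
poor_student = [0.5, 4.0, 1.0]   # prefers a different token

loss_good = distillation_loss(teacher, good_student)
loss_poor = distillation_loss(teacher, poor_student)
print(f"good student loss: {loss_good:.4f}")
print(f"poor student loss: {loss_poor:.4f}")
```

Training the student to minimize this loss pulls its predictions toward the teacher's. A student that already agrees with the teacher scores near zero, while one that favors a different token is penalized heavily.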

DeepSeek R1: The Leap into Autonomous Reasoning

If V3 is the flagship for general tasks, DeepSeek R1 is the specialist for logic and problem-solving. R1 relies on a technique called Chain of Thought (CoT) reasoning. Most LLMs are 'next-word predictors' that, asked for a direct answer, try to guess the entire result in one shot. For complex math or logic problems, a one-shot answer is often incorrect because the model skips necessary intermediate steps. Chain of Thought has the model write out its intermediate reasoning, solving the problem step by step before presenting the final result. This mirrors how a human might use a piece of paper to work through long division rather than doing it all in their head.
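
In practice, an application consuming chain-of-thought output usually needs to separate the working from the final answer. The sketch below assumes the reasoning arrives wrapped in `<think>...</think>` tags, a convention used by R1-style models. The sample text is hand-written for illustration, not real model output, and the function name `split_reasoning` is hypothetical.

```python
import re


def split_reasoning(model_output: str):
    """Split output into (reasoning, answer).

    Assumes the reasoning is wrapped in <think>...</think>; if no such
    block is found, the whole output is treated as the answer.
    """
    match = re.search(r"<think>(.*?)</think>", model_output, flags=re.DOTALL)
    if match is None:
        return "", model_output.strip()
    reasoning = match.group(1).strip()
    answer = model_output[match.end():].strip()
    return reasoning, answer


# Hand-written example mirroring the long-division analogy.
sample_output = (
    "<think>\n"
    "156 / 12: 12 goes into 15 once, remainder 3.\n"
    "Bring down the 6 to get 36; 12 goes into 36 three times.\n"
    "So the quotient is 13.\n"
    "</think>\n"
    "156 divided by 12 is 13."
)

reasoning, answer = split_reasoning(sample_output)
steps = [line for line in reasoning.splitlines() if line.strip()]
print(f"{len(steps)} reasoning steps")
print("final answer:", answer)
```

Keeping the two parts separate lets an interface show only the final answer while still logging the intermediate steps for inspection.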