The Economic Engine Driving the Rise of AI Slop

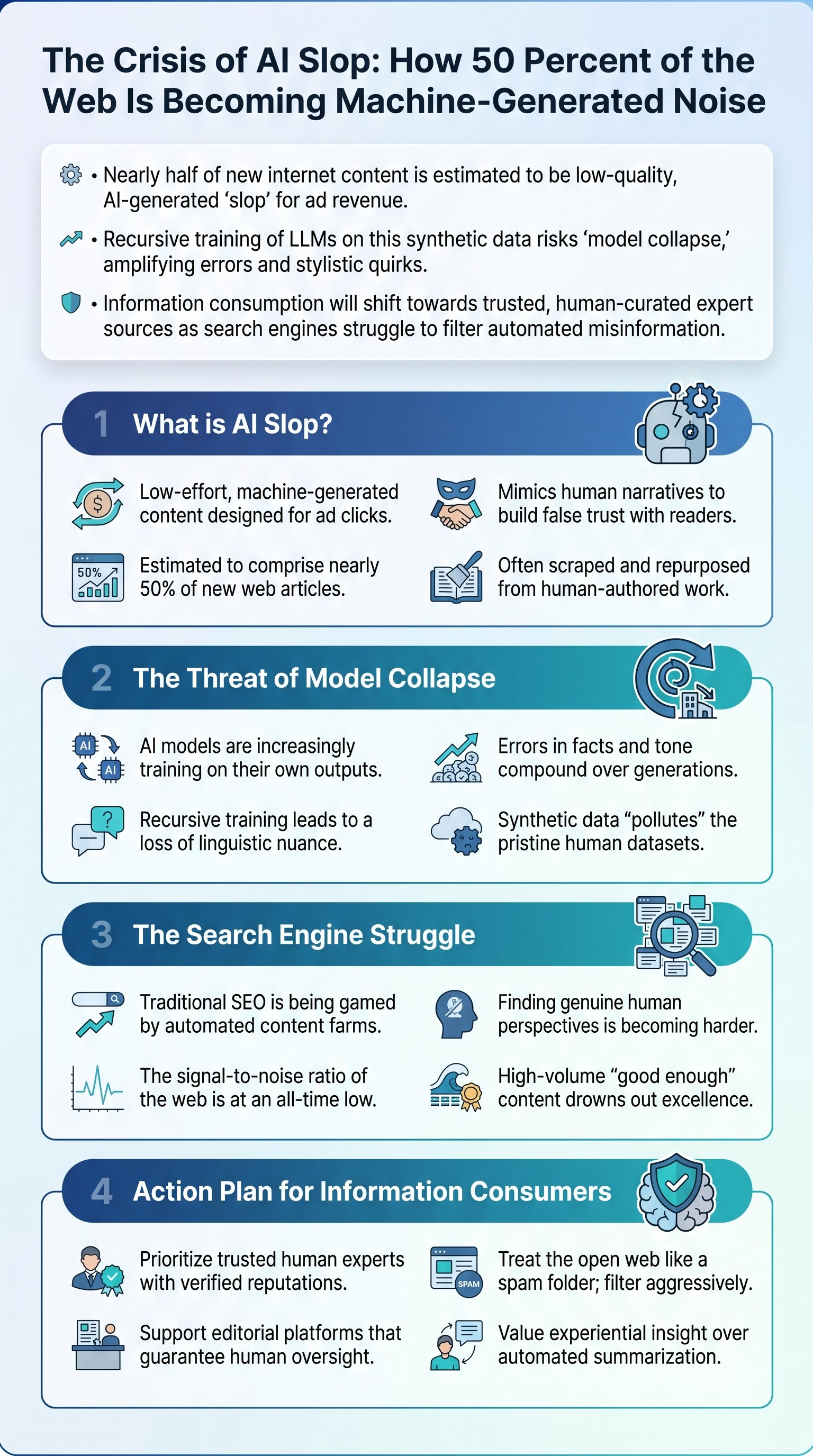

The digital landscape is currently undergoing a seismic shift as the volume of machine-generated content begins to outweigh human-authored material. Mike Pound from the University of Nottingham highlights a disturbing trend: researchers now estimate that approximately 50% of new articles appearing online are generated by artificial intelligence. This phenomenon, often referred to as 'AI slop,' is not merely a technological byproduct but a calculated economic strategy. The primary driver is the low-cost generation of content designed to capture search engine traffic and generate programmatic advertising revenue with almost zero human oversight.

Generating content at scale was once a resource-intensive endeavor requiring writers, editors, and subject matter experts. Today, large language models have reduced the cost of content production to near zero. A single operator can launch thousands of niche websites—ranging from cooking recipes to financial advice—without possessing any actual expertise in those fields. By feeding basic instructions into a chatbot, these 'content farms' can produce polished-looking narratives that mimic the tone of a human expert, complete with fictional anecdotes about grandparents passing down secret recipes to build unearned trust with the reader.

Key insight: AI slop is essentially digital junk mail, where the goal is not to inform the reader but to keep them on a page long enough to trigger an ad impression.

| Attribute | Human-Generated Content | AI Slop Content |
|---|---|---|
| Production Cost | High (Time/Labor) | Near Zero (Automated) |
| Primary Goal | Value/Communication | Ad Revenue/Traffic |
| Accuracy | High (Peer-reviewed) | Variable (Hallucination-prone) |
| Ethical Standing | High (Originality) | Low (Scraped/Derivative) |

This influx of automated content creates a parasitic relationship with the existing web. These systems often scrape high-quality human work, rewrite it using AI to avoid simple plagiarism detectors, and republish it under a different brand. While the individual quality may be low, the sheer volume ensures that a small percentage of users will land on these pages, providing enough revenue to sustain the operation. This creates an environment where information utility is sacrificed for automated profitability.
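
To make the point about "simple plagiarism detectors" concrete, here is a minimal sketch of one common approach: comparing overlapping word sequences (shingles) with Jaccard similarity. The function names, the shingle size `k=3`, and the recipe sentences are illustrative assumptions, not a description of any particular detector. The sketch shows why a full rewrite can slip past exact-overlap checks even when the meaning is unchanged.

```python
import re


def shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Return the set of k-word sequences in text (lowercased, punctuation dropped)."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < k:
        # Too short for a full shingle: treat the whole text as one unit.
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: str, b: str, k: int = 3) -> float:
    """Jaccard similarity of the two shingle sets: |A & B| / |A | B|."""
    sa, sb = shingles(a, k), shingles(b, k)
    if not sa and not sb:
        return 1.0  # two empty texts are trivially identical
    return len(sa & sb) / len(sa | sb)


# Hypothetical example sentences, invented for illustration only.
original = "Simmer the tomatoes slowly for two hours before adding fresh basil"
paraphrase = "Let the tomatoes cook gently for a couple of hours, then stir in fresh basil"

if __name__ == "__main__":
    print("copy vs. original:      ", jaccard(original, original))
    print("paraphrase vs. original:", jaccard(original, paraphrase))
```

A verbatim copy scores as a perfect match, while the paraphrase shares almost no three-word sequences with the original despite conveying the same instruction. Catching rewritten copies requires semantic comparison rather than surface overlap.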

The 50 Percent Threshold and Information Saturation

The estimate that roughly 50% of new articles are AI-generated marks a tipping point for the internet as we know it. When half of what is newly published comes from machines, the signal-to-noise ratio drops sharply. Mike Pound notes that the internet is quickly becoming saturated with content that likely never had a human involved in its production. This is not just a matter of aesthetic preference; it is a fundamental challenge to how we determine what is true or useful in a digital-first society.

Search engines, which have long served as the primary gateways to information, are struggling to keep pace with this automated flood. The traditional metrics used to rank pages—such as keyword density and link structures—are being manipulated by AI-optimized slop. As a result, users often find themselves navigating through layers of redundant, machine-written text before finding a genuine human perspective. This erosion of search quality forces a reassessment of how we discover reliable data and who we choose to trust in an era of infinite automation.

Several broader risks follow from this saturation:

- The proliferation of fake news and political interference.

- The dilution of original creative work by derivative models.

- The increasing difficulty for search engines to provide 'correct' information.

- The rise of 'deepfake' narratives that simulate real-world events.

The internet is transitioning from a repository of human knowledge to a feedback loop of machine-generated echoes. This saturation makes it increasingly difficult for independent creators and legitimate experts to reach an audience. When a bot can generate 1,000 articles in the time it takes a human to write one well-researched piece, the market becomes flooded with 'good enough' content that drowns out excellence. This phenomenon is particularly dangerous in fields requiring high factual accuracy, such as medicine or legal advice.

Memo: If roughly 50% of new articles are machine-generated, then any newly published article you come across has about a coin-flip chance of having been written by an algorithm with no understanding of reality.

The Model Collapse Threat: Training on Synthetic Data

Perhaps the most significant technical concern raised by Mike Pound is the impact of AI slop on the future of AI itself. Large language models (LLMs) are trained by scraping vast amounts of text from the internet. When that text is increasingly generated by AI, the models begin to train on their own outputs. This creates a recursive loop that researchers fear could lead to 'model collapse'—a state where the AI becomes less accurate, more repetitive, and prone to losing the nuance of human language.
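
The recursive loop described above can be illustrated with a toy simulation. This is only a sketch under strong assumptions: a one-dimensional Gaussian stands in for a "model", fitting means estimating its mean and standard deviation, and each generation is trained solely on samples from the previous generation. The function name `simulate_collapse` and its parameters are hypothetical. Real LLM training is far more complex, but the toy shows the basic mechanism: estimation error compounds, and the diversity of the data tends to shrink.

```python
import random
import statistics


def simulate_collapse(sample_size: int = 10,
                      generations: int = 500,
                      seed: int = 0) -> list[float]:
    """Track the fitted standard deviation as a toy model retrains on its own output.

    Returns one value per generation, starting with the fit to the original data.
    """
    rng = random.Random(seed)

    # Generation 0: "human" data drawn from a standard normal distribution.
    data = [rng.gauss(0.0, 1.0) for _ in range(sample_size)]
    spread = [statistics.stdev(data)]

    for _ in range(generations):
        # "Train": fit a Gaussian to whatever data is currently available.
        mu = statistics.mean(data)
        sigma = spread[-1]
        # "Publish": the next generation sees only samples from the fitted model.
        data = [rng.gauss(mu, sigma) for _ in range(sample_size)]
        spread.append(statistics.stdev(data))

    return spread


if __name__ == "__main__":
    history = simulate_collapse()
    for generation in (0, 100, 250, 500):
        print(f"generation {generation:3d}: fitted std = {history[generation]:.3e}")
```

With a small sample per generation, the fitted spread drifts toward zero over many rounds: the toy model gradually forgets the tails of the original distribution. Larger sample sizes slow the drift, and mixing fresh original data into each generation counteracts it, which is one reason the supply of human-written text matters in this argument.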