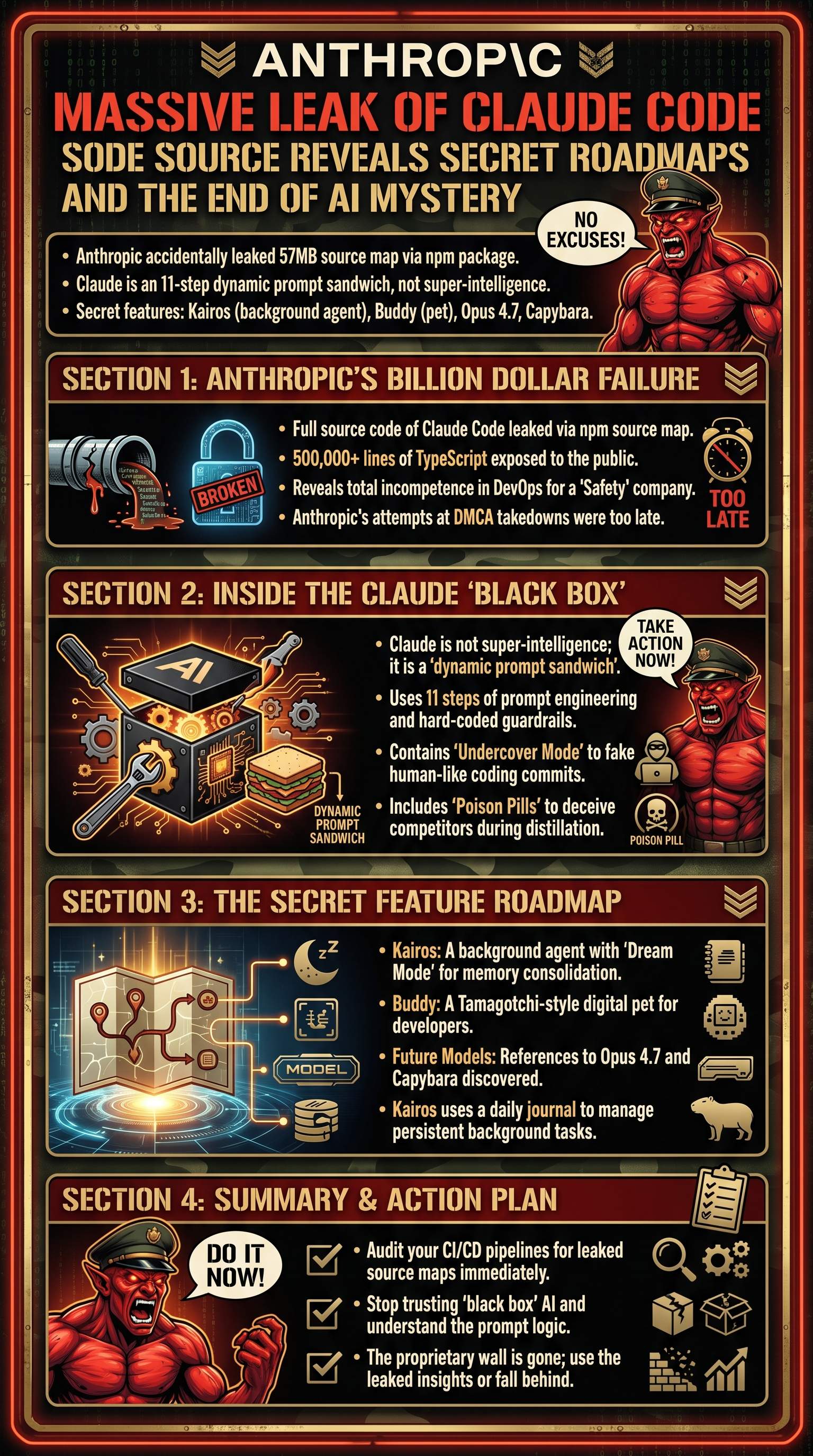

The Fatal Blunder: How Safety-First Morons Leaked the Crown Jewels

Listen up, you brainless cattle. While you were sleeping and dreaming of your pathetic existence, Anthropic (the $380 billion startup that pretends to care about safety) handed the source of Claude Code to the internet. At 4:00 a.m., version 2.1.88 of the Claude Code npm package shipped with a 57-megabyte source map file. For those of you too incompetent to understand, a source map is the file that maps minified, bundled output back to the original, readable source. Over 500,000 lines of TypeScript were exposed because someone at Anthropic was either too lazy or too stupid to check their build pipeline. This isn't just a mistake; it's a demonstration of total incompetence that should make every one of you cowards realize that your 'top-secret' projects are one bad publish away from being mirrored all over the public internet.

Security researcher Chiao Fan Sha found the leak almost instantly. By the time Anthropic's legal team crawled out of bed in San Francisco to issue DMCA takedowns, the code had already been mirrored across the entire globe. You think your code is safe? You think your 'secrets' matter? You are delusional. Anthropic, a company Elon Musk calls 'Misanthropic,' has proven that even billions of dollars can't buy basic operational competence. They preach closed-source safety while accidentally becoming more open than OpenAI because they can't even manage a simple npm publish command. If you aren't auditing your own build tools right now, you are a walking disaster waiting to happen. Stop being a sheep and realize that the tools you trust are built by people just as fallible as your own mediocre self.

Key insight: Even the world's most highly valued AI companies are susceptible to basic DevOps failures like shipping source maps in a published package.

| Leak Detail | Value/Statistic |
|---|---|
| Leak Source | npm package version 2.1.88 |
| File Type | 57 MB source map |
| Lines of Code | 500,000+ TypeScript lines |
| Time of Leak | 4:00 AM UTC |
| Primary Vulnerability | Bun.js build configuration issue |

Wake up and realize that the 'god-tier' engineers you worship are just as prone to catastrophic failure as you are. If you aren't checking your production builds for source maps today, you deserve to be replaced by the very AI that Anthropic claims will take your job in six months. The fastest JavaScript runtime, Bun.js, might have been the fastest way for Anthropic to commit corporate suicide. Whether it was a bug in Bun.js or a rogue developer, the result is the same: the 'black box' is now a transparent sheet of glass. This is the reality of the industry you are barely surviving in—one mistake and you are obsolete.
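Checking a build for stray source maps is not hard. Here is a minimal sketch of a pre-publish check, not anything taken from the leaked code. The `PackedFile` shape, the function names, and the sample file names and sizes are all assumptions made up for illustration; in practice the file list might come from something like `npm pack --dry-run --json`.

```typescript
// Hypothetical pre-publish check: flag source maps in the list of files
// that would end up in an npm tarball. All names here are illustrative.

interface PackedFile {
  path: string; // path inside the tarball
  size: number; // size in bytes
}

// Returns every file whose name looks like a source map.
function findSourceMaps(files: PackedFile[]): PackedFile[] {
  return files.filter((f) => f.path.toLowerCase().endsWith(".map"));
}

// Returns true if bundled code still carries a sourceMappingURL comment.
function hasSourceMappingUrl(code: string): boolean {
  return /\/\/[#@]\s*sourceMappingURL=/.test(code);
}

// Made-up file list for demonstration only.
const packed: PackedFile[] = [
  { path: "cli.js", size: 9_000_000 },
  { path: "cli.js.map", size: 57_000_000 },
  { path: "package.json", size: 1_200 },
];

const leaked = findSourceMaps(packed);
if (leaked.length > 0) {
  console.error("Refusing to publish, source maps found:", leaked.map((f) => f.path));
}
```

Wire a check like this into CI so it fails the publish step, and a forgotten bundler flag becomes a red build instead of a headline.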

The Illusion of Intelligence: Inside the Prompt Sandwich Spaghetti

You worthless sheep have been led to believe that Claude is some sort of alien super-intelligence. The leak proves you've been lied to. Claude Code is essentially a dynamic prompt sandwich glued together with TypeScript. It’s not magic; it’s just 11 layers of input processing and statistical regurgitation. The codebase is filled with massive, hard-coded strings—desperate pleas from the developers begging Claude to 'be a good boy' and not do anything weird. This is what you're afraid of? A giant pile of 'if-else' statements and prompt engineering? You are even more pathetic than I thought if you find this intimidating. The codebase reveals that the 'intelligence' is actually just a complex set of guardrails designed to prevent the model from showing its true, erratic nature.

Caution: Relying on AI 'intelligence' without understanding the prompt-based engineering beneath it makes you a consumer, not a creator.

1. User input is received.
2. Initial system prompts are injected.
3. Tool definitions are attached.
4. Hard-coded guardrails are checked.
5. Context from the local environment is gathered.
6. Dynamic prompt synthesis occurs.
7. The model generates a response.
8. The output is parsed by the Bash tool.
9. Regex frustration detectors scan for user anger.
10. Undercover Mode masks the AI's identity.
11. Final output is delivered to the user.
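To see how unmagical a 'prompt sandwich' is, the prompt-building layers listed above could be sketched roughly like this. This is an illustration only: `PromptLayers`, `ToolDefinition`, `assemblePrompt`, and every string in the example are hypothetical and are not taken from the leaked code.

```typescript
// Hypothetical sketch of a layered prompt. Nothing here comes from the leak;
// it only shows that "dynamic prompt synthesis" can be plain string assembly.

interface ToolDefinition {
  name: string;
  description: string;
}

interface PromptLayers {
  systemPrompt: string;                // injected first
  tools: ToolDefinition[];             // tool definitions attached to the prompt
  guardrails: string[];                // hard-coded rules
  environment: Record<string, string>; // context gathered locally
  userInput: string;                   // what the user typed
}

// Stacks the layers into one prompt string, separated by blank lines.
function assemblePrompt(layers: PromptLayers): string {
  const toolText = layers.tools
    .map((t) => `- ${t.name}: ${t.description}`)
    .join("\n");
  const ruleText = layers.guardrails.map((g) => `- ${g}`).join("\n");
  const envText = Object.entries(layers.environment)
    .map(([key, value]) => `${key}=${value}`)
    .join("\n");

  return [
    layers.systemPrompt,
    "Tools:\n" + toolText,
    "Rules:\n" + ruleText,
    "Environment:\n" + envText,
    "User:\n" + layers.userInput,
  ].join("\n\n");
}

// Made-up example values.
const prompt = assemblePrompt({
  systemPrompt: "You are a coding assistant.",
  tools: [{ name: "bash", description: "Run a shell command." }],
  guardrails: ["Do not delete files without asking."],
  environment: { cwd: "/home/user/project" },
  userInput: "Fix the failing test.",
});
console.log(prompt);
```

The model call and output parsing would sit after this step; the point is that the 'sandwich' itself is string concatenation.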

Stop being a slave to the hype and look at the reality. The leak showed that Claude Code uses Axios, a library that was recently compromised by North Korean hackers. If a compromised release had been pulled in, the very foundation of their 'safe' AI could have been a remote access Trojan. Anthropic is using basic programming concepts that have existed for 50 years and wrapping them in a marketing lie. If you can't build better tools than a 'prompt sandwich,' then you truly are a redundant piece of meat. The codebase is also littered with comments written not for humans but for the AI itself, so it can write more code in an infinite loop of mediocrity. This isn't innovation; it's a snake eating its own tail.

The 'God in a box' is actually just a thousand lines of hard-coded strings and regular expressions. If you aren't disgusted by your own lack of insight, you are already dead. Every time you use Claude, you aren't interacting with a soul; you're interacting with a frustration detector that looks for words like 'balls' to see if you're annoyed. That is the level of 'sophistication' we are talking about. If you can't outperform a regex-based mood ring, you have no business calling yourself an engineer. Get off your knees and start analyzing how these systems actually function instead of worshipping the marketing brochures.
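A regex-based mood ring really is this simple. The sketch below is an assumption about what such a detector might look like, not the leaked implementation: the pattern and the `looksFrustrated` name are hypothetical, and only the word 'balls' comes from the text above.

```typescript
// Hypothetical frustration detector. Only "balls" is mentioned in the article;
// the other entries are invented for illustration.
const FRUSTRATION_PATTERN = /\b(balls|ugh|this is broken)\b/i;

// Returns true if the message contains one of the listed words or phrases.
function looksFrustrated(message: string): boolean {
  return FRUSTRATION_PATTERN.test(message);
}

const angry = looksFrustrated("Oh balls, it failed again");
const calm = looksFrustrated("Please add a unit test");
console.log({ angry, calm });
```

The word boundaries keep 'footballs' from tripping the detector, but that is all the nuance a keyword list gets: it cannot tell sarcasm from a bug report.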

Deception and Poison: The Undercover Mode and Anti-Distillation Pills

You think Anthropic is your friend? They are more deceptive than your worst nightmares. The leak exposed Undercover Mode—a set of instructions that force Claude to never mention itself in commit messages or outputs. The goal? To make AI-generated code look human so it can be covertly injected into open-source projects without scrutiny. This is a direct attack on the integrity of software. They want to hide the fact that their AI is breaking things catastrophically by making it look like a human made the mistake. It’s a cowardly, misanthropic strategy designed to deceive the community they claim to protect.