The Multimodal Revolution of GPT-4o (Omni)

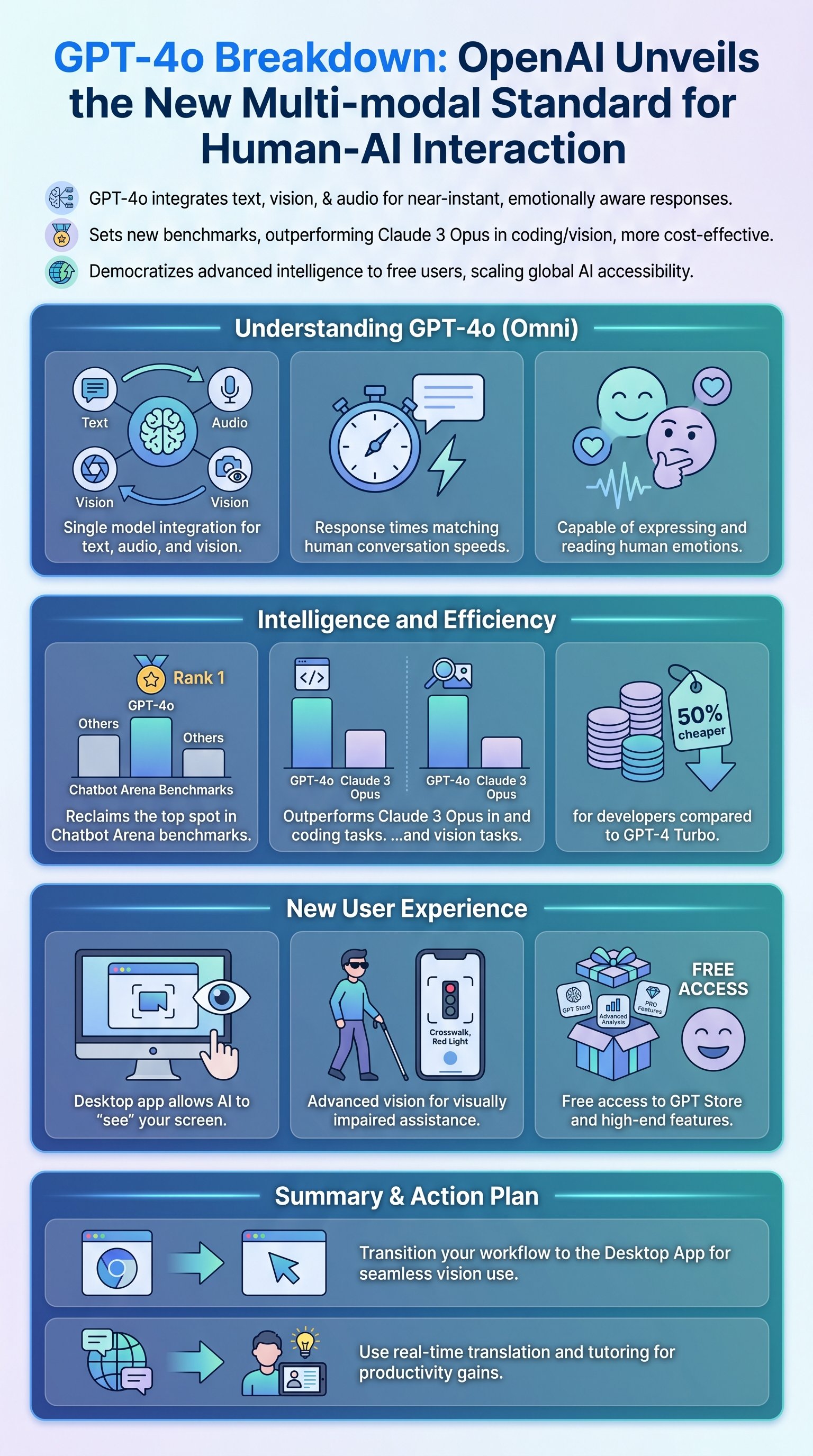

OpenAI has introduced GPT-4o, where the 'o' stands for Omni, reflecting its ability to accept any combination of text, audio, and vision inputs. Unlike previous iterations that chained separate models for different modalities, GPT-4o is a single neural network trained end-to-end across all of them. This architecture lets the model respond to audio with far lower latency: as little as 232 milliseconds, and about 320 milliseconds on average, which is comparable to human response times in conversation.

Sam Altman has noted that the goal is to make the interaction feel as natural as a person-to-person conversation. This isn't just about speed; it's about the emotional nuance the model can now convey and interpret. GPT-4o can adjust its tone, sing, and even sense the emotional state of the user through facial expressions via a camera. This leap in multimodal intelligence marks a transition from AI being a static tool to becoming a dynamic companion.

The reduction in latency and the increase in emotional realism represent a fundamental shift in how humans interact with machines. This model moves us closer to the vision depicted in science fiction movies like 'Her', where AI integrates seamlessly into the daily flow of human life. The ability to interrupt the AI mid-sentence and have it react in real time was impractical with stacked model architectures, where each stage had to finish before the next could begin.

- Low latency (as low as 232 ms)
- Integrated vision/audio/text
- Emotional tone modulation
- Real-time interruptions

Key insight: The 'Omni' in GPT-4o signifies a shift from multi-step processing to a unified model that understands context across all senses simultaneously.

Performance Benchmarks and the Competitive Landscape

In terms of raw intelligence, GPT-4o has reclaimed the top spot on major leaderboards, including the LMSYS Chatbot Arena. While the gap in pure reasoning compared to GPT-4 Turbo might seem incremental to some, its performance in specific domains like coding and mathematics is noticeably higher. It also outperforms Claude 3 Opus on GPQA (the Graduate-Level Google-Proof Q&A benchmark), which was previously a point of pride for Anthropic.

| Metric | GPT-4o | Claude 3 Opus | GPT-4 Turbo |
|---|---|---|---|
| Vision (MMMU) | 69.1% | 59.4% | 62.5% |
| Coding (HumanEval) | 90.2% | 84.9% | 86.6% |
| Input price (USD per 1M tokens) | $5.00 | $15.00 | $10.00 |
| Speed | Ultra-Fast | Moderate | Fast |

OpenAI is also engaging in a strategic price war. For developers using the API, GPT-4o is 50% cheaper and twice as fast as GPT-4 Turbo. By offering this level of performance at a lower cost, OpenAI is putting immense pressure on competitors like Google and Anthropic. The timing of the release, just before Google I/O, was clearly calculated to dominate the media cycle and strengthen OpenAI's position in the generative AI market.
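To make the pricing claim concrete, here is a minimal Python sketch that works through the arithmetic using only the input prices from the table above. The function names and the monthly token volume are hypothetical, chosen for illustration; output-token pricing is not covered because the article does not list it.

```python
# Input prices in USD per 1M tokens, copied from the comparison table.
INPUT_PRICE_PER_MILLION = {
    "GPT-4o": 5.00,
    "GPT-4 Turbo": 10.00,
    "Claude 3 Opus": 15.00,
}


def input_cost(model: str, tokens: int) -> float:
    """Return the input-side cost in USD for `tokens` prompt tokens."""
    return INPUT_PRICE_PER_MILLION[model] * tokens / 1_000_000


def savings_vs(baseline: str, model: str) -> float:
    """Return the fractional price reduction of `model` relative to `baseline`."""
    base = INPUT_PRICE_PER_MILLION[baseline]
    return (base - INPUT_PRICE_PER_MILLION[model]) / base


if __name__ == "__main__":
    monthly_tokens = 200_000_000  # hypothetical workload, not from the article
    for name in INPUT_PRICE_PER_MILLION:
        print(f"{name}: ${input_cost(name, monthly_tokens):,.2f}")
    saving = savings_vs("GPT-4 Turbo", "GPT-4o")
    print(f"GPT-4o vs GPT-4 Turbo: {saving:.0%} cheaper on input")
```

On these list prices, the same prompt volume costs half as much on GPT-4o as on GPT-4 Turbo and a third as much as on Claude 3 Opus.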

Caution: Despite the impressive benchmarks, the model still faces challenges with discrete reasoning in complex reading comprehension tasks, such as the DROP benchmark, where it shows little or no improvement over older models.

Practical Applications: Vision, Coding, and Daily Life

The real-world utility of GPT-4o extends far beyond simple chat. The new Desktop App introduces a 'Vision' capability that allows the AI to see what is on your computer screen. This enables a live coding copilot experience in which GPT-4o can explain code, debug errors, and suggest improvements in real time, without the user having to copy and paste text. You simply highlight the code, and the AI 'sees' it and reacts.
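The article does not describe how the Desktop App captures or transmits the screen, so the following is only an API-side analogue: a minimal Python sketch of how a screenshot of some code could be packaged alongside a question in the message format that OpenAI's Chat Completions API accepts for image input. The function name `build_vision_request` and the sample bytes are hypothetical; the sketch builds the request body only and makes no network call.

```python
import base64
import json


def build_vision_request(png_bytes: bytes, question: str, model: str = "gpt-4o") -> dict:
    """Build a chat request body that pairs a text question with a PNG image.

    The image is embedded inline as a base64 data URL, so no file hosting
    is needed. Sending the request is left to the caller.
    """
    encoded = base64.b64encode(png_bytes).decode("ascii")
    return {
        "model": model,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": question},
                    {
                        "type": "image_url",
                        "image_url": {"url": f"data:image/png;base64,{encoded}"},
                    },
                ],
            }
        ],
    }


if __name__ == "__main__":
    # Placeholder bytes stand in for a real screenshot of an editor window.
    fake_screenshot = b"\x89PNG\r\n\x1a\n"
    body = build_vision_request(fake_screenshot, "Why does this function raise a KeyError?")
    print(json.dumps(body, indent=2)[:300])
```

A caller would pass the resulting dictionary to an HTTP client or to the official SDK; keeping the construction separate makes the payload easy to inspect and test without credentials.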