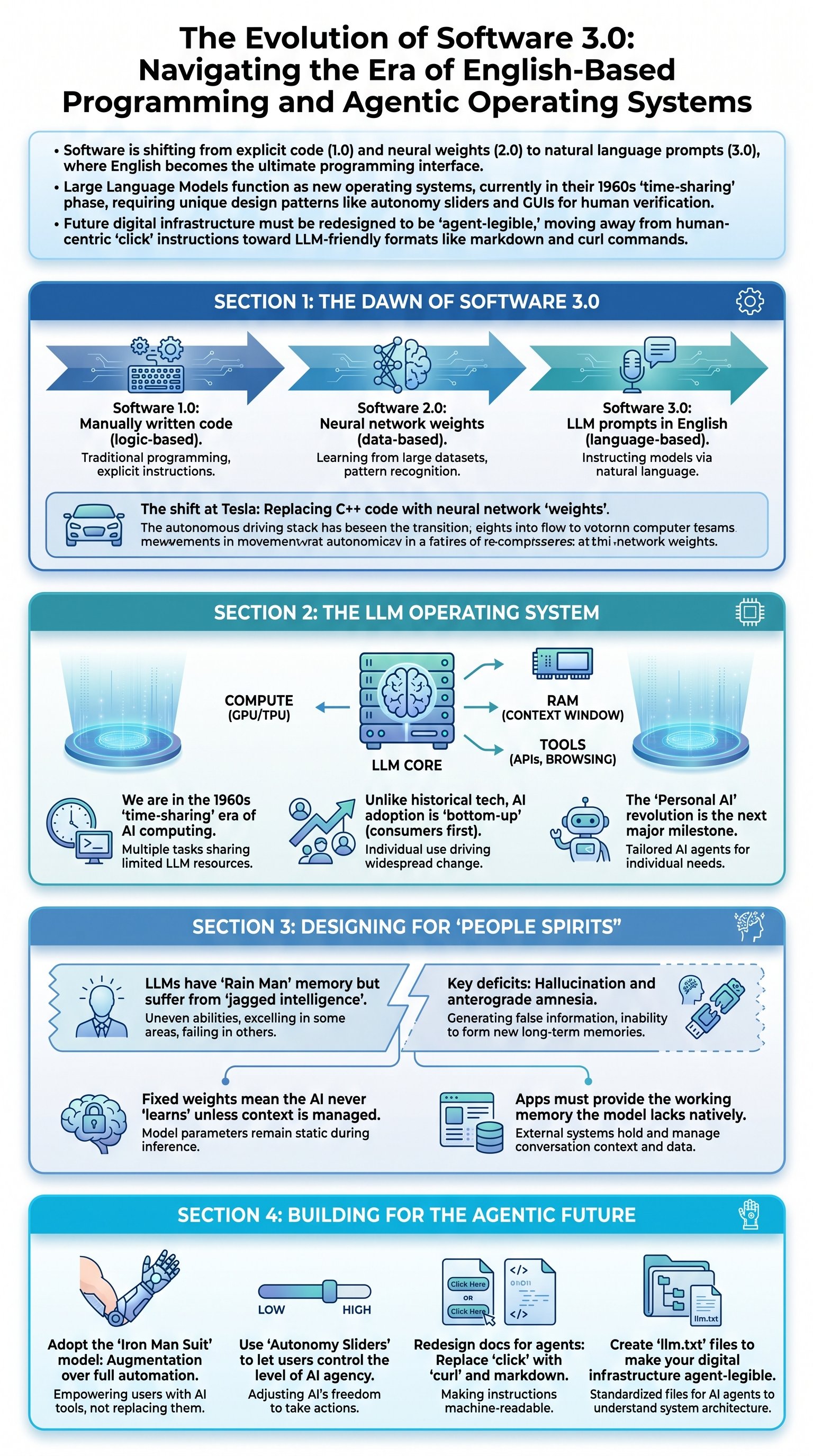

The Three Epochs of Computing: From Logic to English

After roughly 70 years of little fundamental change, software has shifted twice in quick succession, and we can now distinguish three distinct paradigms. Software 1.0 is the traditional code we write in languages such as C++, Python, or Java, where a human provides explicit instructions for the computer to follow. This era centered on logic and deterministic rules. Several years ago, however, Software 2.0 emerged, defined by the weights of neural networks. In this phase, developers stopped writing logic directly and instead became curators of data, using optimizers to 'bake' intelligence into parameters. At Tesla, this transition was literal: as the Autopilot system improved, thousands of lines of C++ code were deleted and replaced by neural networks that took over complex tasks such as stitching together information across images from different cameras.

Now, we are entering the era of Software 3.0. In this new paradigm, neural networks have become programmable through Large Language Models (LLMs). Remarkably, the programming language for this new computer is English. This shift democratizes software development, turning every person who can speak a natural language into a potential programmer. This is not just a change in syntax; it is a fundamental shift in how we interact with the digital world, moving from rigid code to flexible, stochastic simulations of human intelligence.

Key insight: Software 3.0 is not just about using AI as a tool; it is about treating the LLM as a programmable engine where the prompt is the source code.

| Feature | Software 1.0 | Software 2.0 | Software 3.0 |
|---|---|---|---|
| Core Component | Explicit Code (C++, etc.) | Neural Weights | English Prompts |
| Primary Activity | Writing Logic | Data Engineering | Orchestrating Agents |
| Human Role | Logical Architect | Data Curator | Director/Verifier |
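To make the table concrete, here is a minimal, hypothetical sketch of one task, classifying the sentiment of a short review, written in each paradigm. The keyword sets, the toy training data, and the prompt wording are all illustrative assumptions. The Software 2.0 part uses a tiny logistic regression trained by gradient descent, and the Software 3.0 part only builds the prompt, since sending it to a model would require an LLM API that is not shown here.

```python
import math
import re


def tokenize(text: str) -> list[str]:
    # Lowercase and keep only runs of letters and apostrophes.
    return re.findall(r"[a-z']+", text.lower())


# --- Software 1.0: a human writes explicit, deterministic rules. ---
POSITIVE = {"love", "great", "excellent", "good"}
NEGATIVE = {"hate", "terrible", "awful", "bad"}


def sentiment_v1(text: str) -> str:
    words = tokenize(text)
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    return "positive" if score >= 0 else "negative"


# --- Software 2.0: the behavior lives in weights learned from data. ---
def featurize(text: str, vocab: list[str]) -> list[float]:
    # Binary bag-of-words vector over a fixed vocabulary.
    present = set(tokenize(text))
    return [1.0 if w in present else 0.0 for w in vocab]


def train_v2(examples, epochs=200, lr=0.5):
    vocab = sorted({w for text, _ in examples for w in tokenize(text)})
    weights, bias = [0.0] * len(vocab), 0.0
    for _ in range(epochs):
        for text, label in examples:
            x = featurize(text, vocab)
            z = bias + sum(w * xi for w, xi in zip(weights, x))
            p = 1.0 / (1.0 + math.exp(-z))  # sigmoid
            err = p - label  # gradient of the log loss with respect to z
            weights = [w - lr * err * xi for w, xi in zip(weights, x)]
            bias -= lr * err
    return vocab, weights, bias


def sentiment_v2(text: str, model) -> str:
    vocab, weights, bias = model
    x = featurize(text, vocab)
    z = bias + sum(w * xi for w, xi in zip(weights, x))
    return "positive" if z >= 0 else "negative"


# --- Software 3.0: the "program" is an English (few-shot) prompt. ---
def sentiment_v3_prompt(text: str) -> str:
    return (
        "Classify the sentiment of each review as positive or negative.\n"
        "Review: I love this phone\nSentiment: positive\n"
        "Review: The battery is awful\nSentiment: negative\n"
        f"Review: {text}\nSentiment:"
    )


if __name__ == "__main__":
    data = [
        ("i love this phone", 1), ("great battery life", 1), ("excellent screen", 1),
        ("i hate this phone", 0), ("terrible battery", 0), ("awful screen", 0),
    ]
    model = train_v2(data)
    review = "I hate this phone"
    print(sentiment_v1(review), sentiment_v2(review, model))
    print(sentiment_v3_prompt(review))
```

In the first version the behavior is fully specified by hand-written keyword sets; in the second it is determined by `weights` and `bias` that nobody wrote directly; in the third it is determined by the English text of the prompt.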

The LLM as the Modern Operating System

To understand the current state of AI, we must stop viewing LLMs as simple chatbots and start seeing them as operating systems (OS). In this analogy, the LLM plays the role of the CPU and its context window plays the role of RAM: the model orchestrates what sits in that working memory and calls on various tools to solve problems. We are, however, currently in the '1960s era' of this new OS. Because LLM compute is very expensive, the infrastructure is centralized in the cloud, and we access it through 'time-sharing', much like the mainframes of the mid-20th century. The personal computing revolution for LLMs has not yet happened because local execution is not yet economically viable for most users.
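The CPU/RAM analogy can be made concrete with a small sketch of how an application might manage the context window as a fixed-size working memory. This is a hypothetical illustration, not how any particular model or vendor API works: the `ContextWindow` class, the whitespace-based token count, and the evict-oldest policy are all assumptions chosen for simplicity. Real systems use model-specific tokenizers and often summarize old turns rather than dropping them.

```python
from dataclasses import dataclass, field


@dataclass
class ContextWindow:
    """Hypothetical fixed-budget 'RAM' for an LLM conversation."""

    max_tokens: int
    messages: list[tuple[str, str]] = field(default_factory=list)

    @staticmethod
    def count_tokens(text: str) -> int:
        # Crude assumption: one token per whitespace-separated word.
        return len(text.split())

    def used(self) -> int:
        return sum(self.count_tokens(text) for _, text in self.messages)

    def append(self, role: str, text: str) -> None:
        self.messages.append((role, text))
        # Evict the oldest non-system message until usage fits the budget again.
        while self.used() > self.max_tokens:
            oldest = next(
                (i for i, (r, _) in enumerate(self.messages) if r != "system"), None
            )
            if oldest is None:  # only system messages remain; nothing to evict
                break
            self.messages.pop(oldest)


if __name__ == "__main__":
    window = ContextWindow(max_tokens=10)
    window.append("system", "you are helpful")
    window.append("user", "one two three four")
    window.append("assistant", "five six seven")
    window.append("user", "eight nine")  # usage would be 12, so the oldest user turn is evicted
    print([role for role, _ in window.messages], window.used())
```

Keeping system messages pinned while evicting conversational turns mirrors the idea of an OS deciding what stays resident in limited memory.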

This new OS also flips the traditional direction of technology diffusion. Historically, transformative technologies such as GPS or cryptography were adopted first by governments and corporations and only later by consumers. With LLMs, the opposite is true: individuals use AI for everyday tasks like figuring out how to boil an egg or drafting simple emails, while large organizations and governments lag behind. Because adoption runs bottom-up, much of the most innovative software is being built by individuals and small startups rather than by centralized powers.

Trend: The 'Personal LLM' revolution is coming, but for now, we are all 'thin clients' connected to a centralized intelligence grid.

- Cloud Centralization: Training and inference remain capital-intensive, requiring large GPU clusters.

- Universal Access: LLMs are 'beamed' to billions of devices simultaneously through browser interfaces.

- Natural Interface: The 'terminal' for this new OS is a simple text box.

Designing for the 'People Spirit' Psychology

Designing apps for LLMs requires understanding their unique 'psychology.' LLMs are essentially stochastic simulations of human thought, which can be described as 'people spirits.' Like the character in the film 'Rain Man,' they have a superhuman, encyclopedic memory, yet they also suffer from significant cognitive deficits. They can recall complex SHA hashes instantly but might fail at simple tasks, such as determining whether 9.11 is larger than 9.9 or counting the number of 'r' letters in the word 'strawberry.' This 'jagged intelligence' profile means that LLM apps must be designed to leverage the models' strengths while mitigating their weaknesses.
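One common way to mitigate these weaknesses is to route questions the model is known to get wrong to ordinary deterministic code (Software 1.0) and reserve the LLM for everything else. The sketch below is hypothetical: the `route` function, its two regular-expression patterns, and the convention of returning `None` to mean "hand this to the LLM" are illustrative assumptions, not part of any particular framework.

```python
import re
from decimal import Decimal
from typing import Optional

# Matches questions like "Is 9.11 larger than 9.9?"
COMPARE = re.compile(r"is (\d+(?:\.\d+)?) larger than (\d+(?:\.\d+)?)\??", re.IGNORECASE)
# Matches questions like "How many r's are in strawberry?"
COUNT = re.compile(r"how many (\w)'?s? are in (\w+)\??", re.IGNORECASE)


def route(question: str) -> Optional[str]:
    """Answer deterministically when possible; return None to defer to an LLM."""
    q = question.strip()
    m = COMPARE.fullmatch(q)
    if m:
        # Decimal compares the numbers exactly, avoiding binary float rounding.
        return "yes" if Decimal(m.group(1)) > Decimal(m.group(2)) else "no"
    m = COUNT.fullmatch(q)
    if m:
        letter, word = m.group(1).lower(), m.group(2).lower()
        return str(word.count(letter))
    return None  # hypothetical: forward the question to the model here


if __name__ == "__main__":
    for q in ["Is 9.11 larger than 9.9?", "How many r's are in strawberry?", "Write a haiku"]:
        print(q, "->", route(q))
```

The design choice is architectural: the deterministic path covers the known low points of the jagged profile reliably, while open-ended requests still go to the model, where its strengths lie.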