The Anatomy of the AI Financial Ecosystem and the Scale of Investment

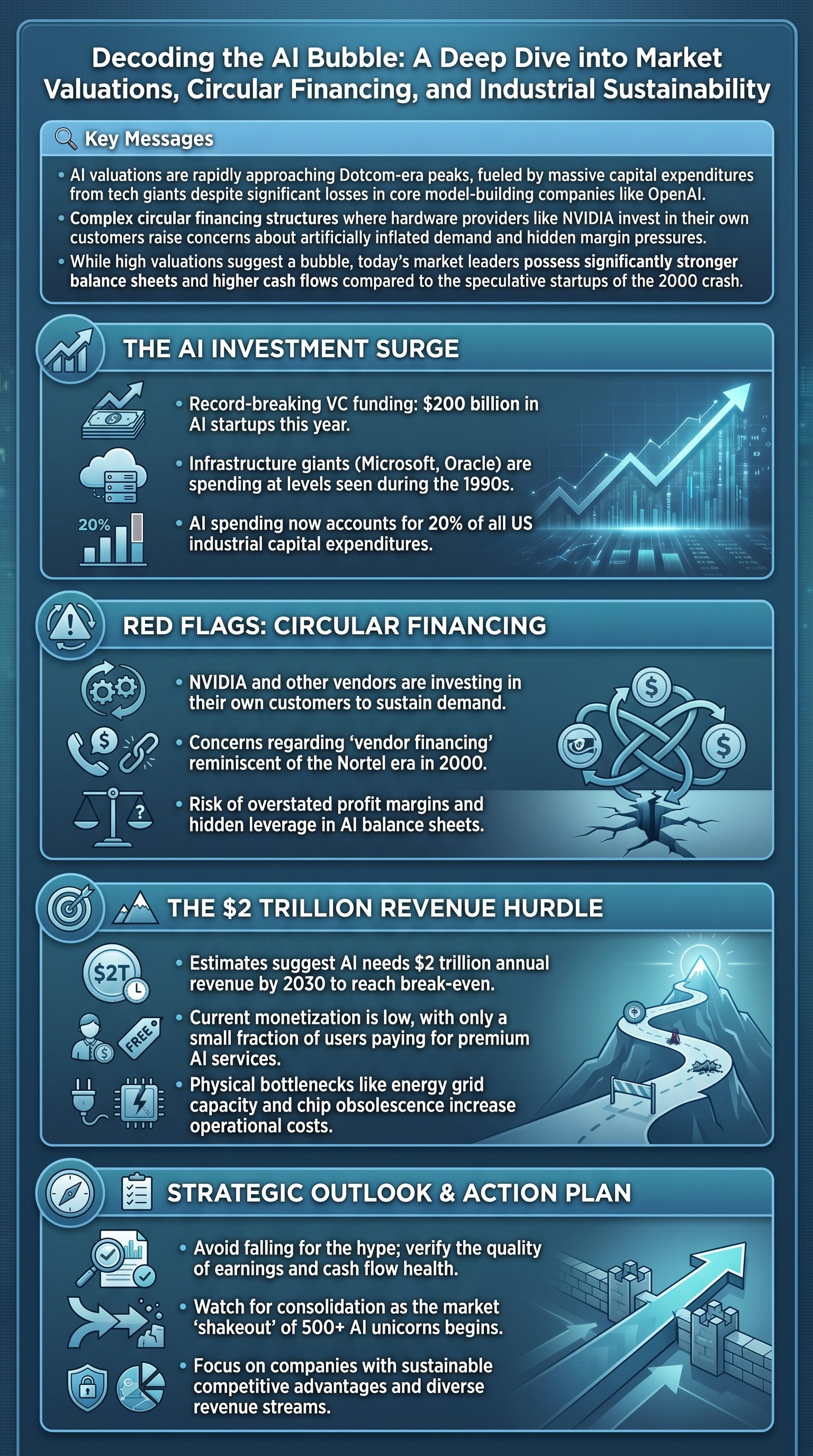

The current trajectory of artificial intelligence has moved beyond technological curiosity into a massive industrial buildout that rivals the greatest infrastructure projects in history. Three years after the launch of ChatGPT, the landscape has shifted from experimental software to a capital-intensive arms race. Leading the charge are the AI chip companies, most notably NVIDIA and AMD, which supply the specialized hardware needed to train and run models. These firms have seen their market capitalizations soar, with NVIDIA briefly becoming the world’s most valuable company. Supporting them are the infrastructure providers (Amazon (AWS), Microsoft (Azure), and Oracle), which invest billions in data centers and rent that compute to the next tier of the ecosystem.

At the top of this structure are the model developers, such as OpenAI, Anthropic, and xAI. While these companies are the faces of the revolution, they currently operate as massive "money furnaces." OpenAI, for instance, is projected to burn roughly $8.5 billion in cash in 2025 alone, and its cumulative cash burn is estimated to reach $115 billion through 2029. This aggressive spending rests on the belief that the first company to achieve Artificial General Intelligence (AGI) will earn returns that dwarf these initial costs. The sheer volume of capital being deployed is nonetheless staggering: AI-related spending now accounts for nearly 20% of total capital expenditures across all US industries.

Key insight: The AI boom is currently a supply-side phenomenon where infrastructure is being built at a scale that assumes future demand will materialize at an unprecedented rate.

| Player Category | Primary Role | Key Examples | Financial Status |
|---|---|---|---|
| Hardware Providers | Design and sell AI chips (GPUs) | NVIDIA, AMD | Highly Profitable |
| Infrastructure Providers | Build data centers and rent cloud compute | Amazon (AWS), Microsoft, Oracle | Strong Balance Sheets |
| Model Developers | Build and train AI models | OpenAI, Anthropic, xAI | High Cash Burn |

Despite the excitement, voices of caution are growing louder. Michael Burry, famous for shorting the US housing market before the 2008 crash, has recently taken bearish positions against key AI firms. The International Monetary Fund (IMF) and the Bank of England have also warned about soaring valuations. The core concern is whether revenue from AI applications can ever justify the roughly $7 trillion in data center capital expenditures that McKinsey estimates will be needed worldwide by 2030.

The Circular Financing Dilemma and Vendor Risks

One of the most controversial aspects of the current AI market is the rise of circular financing deals. In these arrangements, a hardware vendor such as NVIDIA invests capital in a startup or data center provider, which then uses that capital to buy the vendor’s chips. While not illegal, the practice can prop up demand and overstate a company’s true profit margins. By investing in its own customers, a vendor effectively discounts its products without that discount showing up in its operating margins: the sale is still booked as revenue at full price, while the investment is recorded as an asset on the balance sheet rather than as a cost.
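The accounting asymmetry can be illustrated with a simplified calculation. The sketch below uses entirely hypothetical figures (in millions of dollars) and assumes the equity investment is booked purely as a balance-sheet asset with no effect on the income statement; it ignores real-world accounting rules for consideration paid to customers and any later revaluation of the stake. It compares a vendor that grants a 20% price cut with one that instead invests the same amount in its customer.

```python
# Simplified illustration; all figures are hypothetical, in $ millions.
LIST_PRICE_SALES = 1_000.0  # chips sold to one customer at list price
COST_OF_GOODS = 300.0       # cost of producing those chips
OPERATING_EXPENSES = 200.0  # vendor's other operating costs
SUPPORT = 200.0             # value handed back to the customer in either case


def operating_margin(revenue: float) -> float:
    """Operating income as a fraction of revenue."""
    return (revenue - COST_OF_GOODS - OPERATING_EXPENSES) / revenue


# Case A: an explicit 20% discount lowers reported revenue.
revenue_a = LIST_PRICE_SALES - SUPPORT
margin_a = operating_margin(revenue_a)
net_cash_a = revenue_a

# Case B: the vendor invests the same amount in the customer, which spends it
# on chips at full price. Assumption: the stake is booked only as an asset.
revenue_b = LIST_PRICE_SALES
margin_b = operating_margin(revenue_b)
net_cash_b = revenue_b - SUPPORT  # cash paid out for the investment

if __name__ == "__main__":
    print(f"Discount:   margin {margin_a:.1%}, net cash {net_cash_a:.0f}")
    print(f"Investment: margin {margin_b:.1%}, net cash {net_cash_b:.0f}")
```

Both cases leave the vendor with 800 in net cash, yet the reported operating margin is 37.5% with the discount and 50.0% with the investment.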

These arrangements draw uncomfortable parallels to the vendor financing practiced by companies like Nortel in the late stages of the dot-com bubble. Critics argue that if end-user demand for AI does not grow fast enough, the entire circle could collapse. AI companies are already borrowing billions of dollars by pledging the chips they own as collateral for new loans. This leverage adds a layer of systemic risk to the industry: the failure of one major node could trigger a cascade of defaults across the financing chain.

Caution: Circular financing can mask the true health of a market by creating a feedback loop of artificial demand that is unsustainable without external revenue.

- NVIDIA has pledged billions to OpenAI while OpenAI remains a primary chip customer.

- Microsoft acts as both a lead investor in OpenAI and one of its major cloud providers.

- Oracle receives massive compute orders from companies that NVIDIA has partially funded, and it buys NVIDIA chips to fulfill them.

The risk here is not just overvaluation, but the potential for a liquidity crisis if investors stop funding the 'burn' before customers start paying the 'bill'. Currently, only about 6% of ChatGPT’s 800 million weekly active users are estimated to be paying subscribers. This gap between usage and monetization is a fundamental hurdle that the industry must clear to avoid a major correction. Even if the technology is revolutionary, the business models supporting it must eventually become self-sustaining.
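The monetization gap can be put in rough numbers. This sketch takes the 800 million weekly users and the roughly 6% paying share cited above and adds one labeled assumption: every paying user pays a flat $20 per month, the US list price of the ChatGPT Plus tier. Real revenue depends on the actual mix of plans, regional pricing, and non-subscription income, none of which is modeled here.

```python
# Back-of-the-envelope subscription revenue; inputs as labeled.
WEEKLY_ACTIVE_USERS = 800_000_000  # figure cited in the text
PAYING_SHARE_PERCENT = 6           # ~6% paying, as estimated in the text
ASSUMED_MONTHLY_PRICE_USD = 20     # assumption: one flat price for every payer

# Integer arithmetic avoids floating-point rounding in the head count.
paying_users = WEEKLY_ACTIVE_USERS * PAYING_SHARE_PERCENT // 100
free_users = WEEKLY_ACTIVE_USERS - paying_users
annual_revenue_usd = paying_users * ASSUMED_MONTHLY_PRICE_USD * 12

if __name__ == "__main__":
    print(f"Paying users: {paying_users:,}")
    print(f"Free users:   {free_users:,}")
    print(f"Implied subscription revenue: ${annual_revenue_usd / 1e9:.2f}B per year")
```

Under these assumptions, about 48 million paying users would generate roughly $11.5 billion a year, while some 752 million weekly users pay nothing.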

Physical Bottlenecks and the Reality of Scalability

Beyond the financial metrics, the AI revolution faces significant physical constraints that equity markets often overlook. The most pressing bottleneck is electricity. Training and running modern large language models (LLMs) requires immense power; OpenAI alone plans to use enough electricity to power 26 million households. This demand is colliding with an aging electrical grid and slow regulatory processes. In the US, the Nuclear Regulatory Commission (NRC) licensing process for a new nuclear plant can take five years, followed by another five or more years of construction.
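To translate the household comparison into grid terms, the sketch below converts 26 million households into an average continuous power draw. It assumes an average US household uses about 10,500 kWh of electricity per year, a round figure close to recent U.S. Energy Information Administration averages; a different figure scales the result proportionally. It also adds the licensing and construction durations quoted above to show the minimum lead time for new nuclear capacity.

```python
HOUSEHOLDS = 26_000_000                  # figure cited in the text
ASSUMED_KWH_PER_HOUSEHOLD_YEAR = 10_500  # assumption: average US household use
HOURS_PER_YEAR = 8_760                   # 365 days x 24 hours

annual_kwh = HOUSEHOLDS * ASSUMED_KWH_PER_HOUSEHOLD_YEAR
annual_twh = annual_kwh / 1e9                   # 1 TWh = 1e9 kWh
average_gw = annual_kwh / HOURS_PER_YEAR / 1e6  # average kW, then kW -> GW

# Nuclear lead time from the text: ~5 years licensing + 5 or more building.
LICENSING_YEARS = 5
MIN_CONSTRUCTION_YEARS = 5
min_lead_time_years = LICENSING_YEARS + MIN_CONSTRUCTION_YEARS

if __name__ == "__main__":
    print(f"Annual energy: {annual_twh:.0f} TWh")
    print(f"Average load:  {average_gw:.1f} GW")
    print(f"Minimum nuclear lead time: {min_lead_time_years} years")
```

Under this assumption the comparison implies about 273 TWh a year, or an average load of roughly 31 GW, and a reactor whose application is filed today would not come online in under about a decade.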