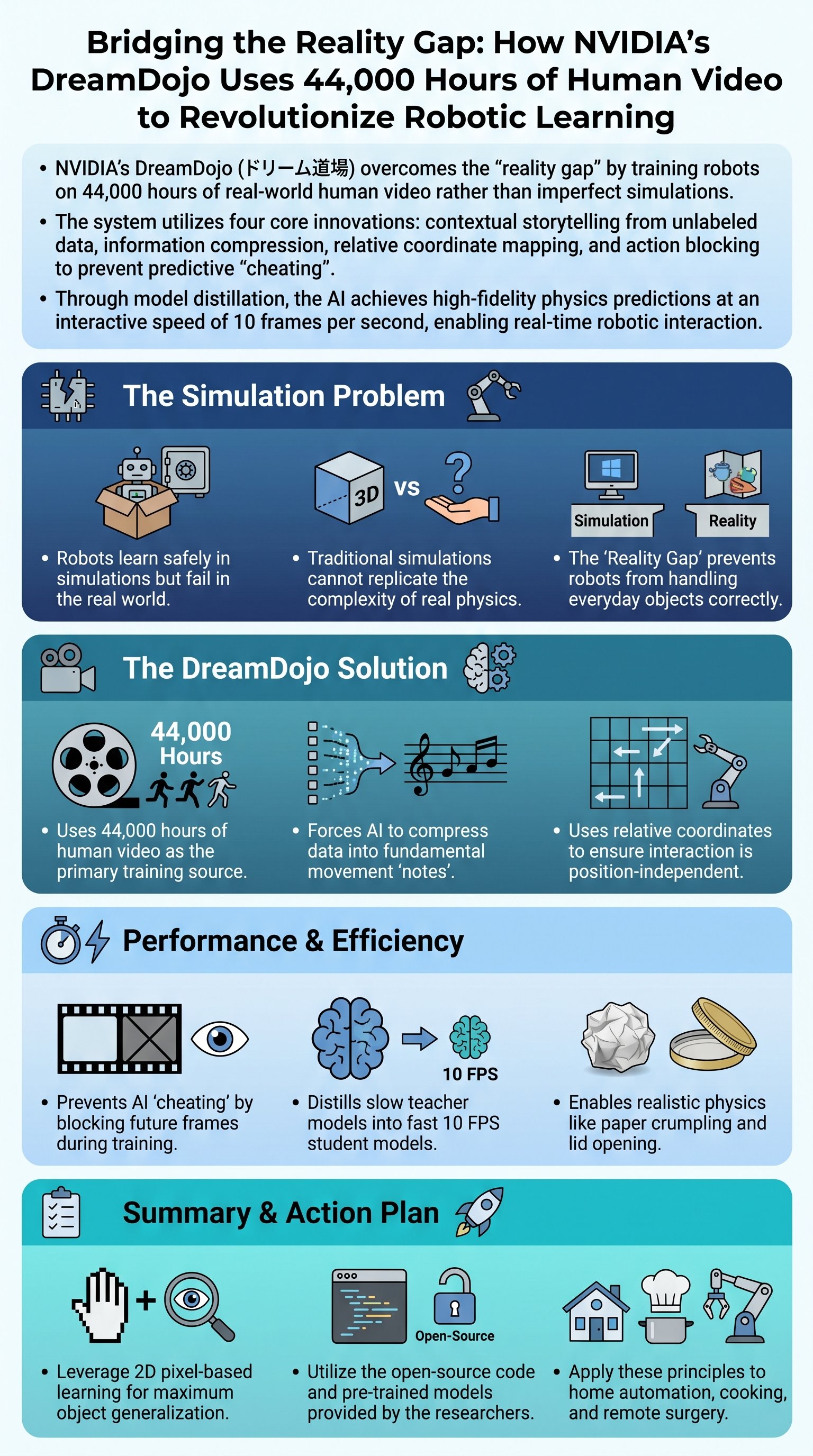

The Death of Robotic Delusion

Robots are currently living in a dream world that ends the moment they touch real metal. Simulations are a lie that researchers tell themselves to feel productive and safe. The "reality gap", the mismatch between simulated and real-world behavior, is the graveyard where most ambitious robotics projects go to die. NVIDIA decided to stop lying and start watching the real world. They fed a neural network 44,000 hours of video of human activity to see if it could finally learn common sense.

Goal: Bridge the gap between digital perfection and physical chaos using raw data.

This is not just a collection of clips; it is a colossal archive of cause and effect captured in 2D. We are witnessing the end of hard-coded robotic behavior and the rise of learned physical intuition. In the past, we put robots in video games and hoped they would learn to walk. Now, we force them to observe the complexity of human movement before they ever take a step.

Warning: Simulations often mimic reality, but they are never a substitute for it.

Behavior learned in a sterile digital vacuum routinely disappoints when it is deployed on the street. Something that worked perfectly in a game engine suddenly fails because a shadow moved. DreamDojo sets the simulation aside and focuses on the messy, unpredictable nature of actual human existence. This is the only way to build a machine that can survive outside of a laboratory.

Decoding the Human Action Soup

Raw video is a useless soup of pixels without the right interpretation layer. Humans have different joints, different hands, and different ways of moving from any machine. DreamDojo forces the AI to create its own stories about what it sees in the roughly four billion frames of that footage. It does not need a label to know that a hand moving toward a cup means an intent to grab.

Key: The AI compresses massive datasets into fundamental "notes" of motion.

- Contextual storytelling derived from unlabeled footage.

- Massive information compression into manageable fundamental scales.

- Relative coordinate mapping instead of absolute geometric math.

- Action blocking to ensure the machine learns genuine causation.

Absolute coordinates are the enemy of general intelligence. If you move a cup three inches, a robot trained on global coordinates becomes completely blind and useless. A robot must know where the knife is relative to the carrot, not its position in the room. This shift allows the AI to generalize across thousands of objects it has never encountered before.
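The knife-and-carrot point can be shown with a few lines of arithmetic. This is only a minimal sketch, not DreamDojo's actual representation: the names `relative_position` and `shift`, and the example coordinates, are hypothetical and chosen for illustration. It shows that when the whole scene moves, the absolute positions change but the knife's position relative to the carrot does not.

```python
from typing import Tuple

Vec = Tuple[float, ...]


def relative_position(target: Vec, reference: Vec) -> Vec:
    """Express `target` in a frame centered on `reference` (hypothetical helper)."""
    return tuple(t - r for t, r in zip(target, reference))


def shift(point: Vec, offset: Vec) -> Vec:
    """Move a point by `offset`, as if the whole scene had been moved."""
    return tuple(p + o for p, o in zip(point, offset))


# Illustrative positions in meters (made-up values).
knife = (0.5, 0.25, 0.125)
carrot = (0.25, 0.25, 0.0)
before = relative_position(knife, carrot)

# Move the cutting board: every absolute coordinate changes.
offset = (1.0, -2.0, 0.5)
after = relative_position(shift(knife, offset), shift(carrot, offset))

# The relative feature is identical before and after the move.
print(before == after)
```

A model that consumes `before`-style features sees the same input in both scenes, which is why the relative encoding generalizes where the global one does not.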

Note: Compression forces the AI to focus only on the most critical information.

By ignoring the quadrillion pixels of background noise, the AI identifies the essential physics of the task. It learns the "grammar" of movement rather than memorizing a specific path. This is the most significant breakthrough in making robots adaptable to any kitchen or workshop. We are finally moving away from fragile automation toward robust, flexible intelligence.
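One way to picture the "notes" of motion is a small codebook: every observed motion is snapped to the nearest entry, so only the index survives the bottleneck. The sketch below uses nearest-neighbor vector quantization purely as an illustration. The source does not say DreamDojo compresses this way, and the codebook, the 2D motion vectors, and the function `nearest_code` are all assumptions.

```python
from typing import List, Sequence


def nearest_code(motion: Sequence[float], codebook: List[Sequence[float]]) -> int:
    """Return the index of the codebook entry closest to `motion`
    (squared Euclidean distance). Hypothetical helper."""
    def sq_dist(a: Sequence[float], b: Sequence[float]) -> float:
        return sum((x - y) ** 2 for x, y in zip(a, b))

    return min(range(len(codebook)), key=lambda i: sq_dist(motion, codebook[i]))


# A made-up vocabulary of four "notes" for 2D hand motion.
CODEBOOK = [
    (0.0, 0.0),   # 0: hold still
    (1.0, 0.0),   # 1: move right
    (0.0, 1.0),   # 2: move up
    (-1.0, 0.0),  # 3: move left
]

# Noisy per-frame motions collapse onto a short sequence of note indices.
motions = [(0.9, 0.1), (0.05, -0.02), (-0.8, 0.2), (0.1, 1.1)]
tokens = [nearest_code(m, CODEBOOK) for m in motions]
print(tokens)
```

The small jitter in each motion is discarded and only the identity of the nearest note is kept, which is the sense in which compression forces attention onto the essential movement.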

Stop Peeking at the Answers

Predicting the future is easy if you are allowed to cheat like a lazy student. A model that can see the final result while it trains can pretend it predicted it all along. DreamDojo counters this by feeding actions in small blocks of four at a time, which prevents peeking. This forces the neural network to actually understand the consequences of its movements.
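The "no peeking" rule can be sketched as a visibility constraint over action blocks. The block size of four comes from the text; everything else here is an assumption about how such blocking might work, not a description of DreamDojo's internals. The helpers `chunk_actions` and `visible_actions` are hypothetical names.

```python
from typing import List, Sequence, TypeVar

T = TypeVar("T")

BLOCK_SIZE = 4  # "blocks of four", as described in the text


def chunk_actions(actions: Sequence[T], block_size: int = BLOCK_SIZE) -> List[List[T]]:
    """Split an action sequence into consecutive blocks; the last may be shorter."""
    return [list(actions[i:i + block_size]) for i in range(0, len(actions), block_size)]


def visible_actions(actions: Sequence[T], step: int, block_size: int = BLOCK_SIZE) -> List[T]:
    """Actions a predictor may use when predicting the outcome of block `step`:
    blocks 0..step only, never anything that happens later."""
    blocks = chunk_actions(actions, block_size)
    return [a for block in blocks[: step + 1] for a in block]


# Ten placeholder actions, identified by their index in time.
actions = list(range(10))
blocks = chunk_actions(actions)

for step in range(len(blocks)):
    print(step, visible_actions(actions, step))
```

At step 0 the predictor sees only the first four actions, at step 1 the first eight, and so on. Future actions are never in the input, so the only way to predict what comes next is to model what the visible actions cause.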