The Scaling Myth: Why More Data Does Not Guarantee Intelligence

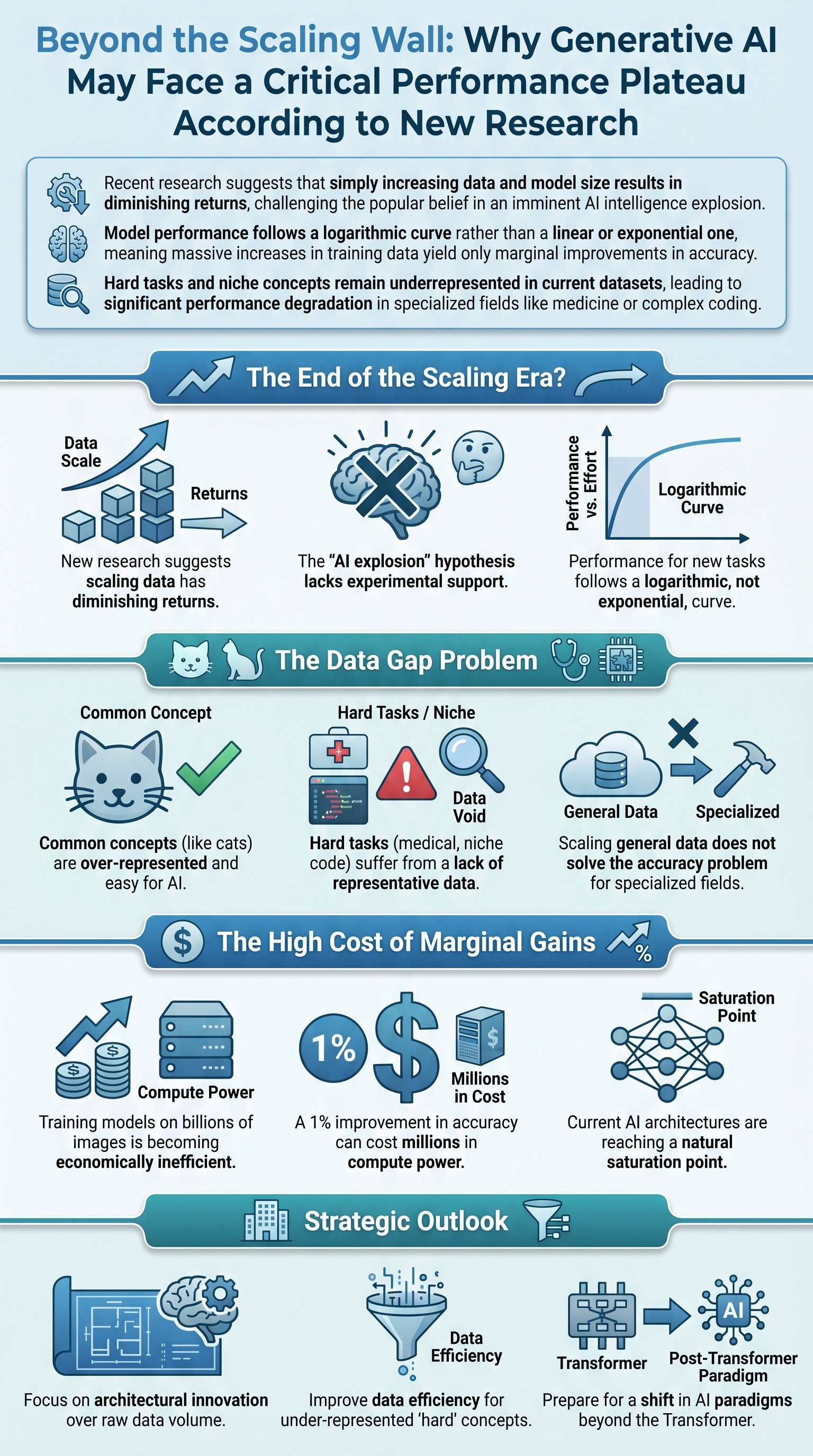

For the past few years, the dominant narrative in the technology sector has been that Artificial Intelligence is on an unstoppable upward trajectory. The prevailing logic suggests that by simply feeding larger Vision Transformers and language models more data, we will eventually achieve a form of general intelligence. Dr. Mike Pound from Computerphile examines a recent research paper that challenges this 'scaling law' optimism. The paper argues that the amount of data required to achieve high-level, zero-shot performance on new tasks is becoming astronomically vast, to the point of being practically unattainable.

While big tech companies often promote the idea that showing a model enough 'cats and dogs' will eventually allow it to understand the nuances of an 'elephant' or any other concept, scientific evidence suggests otherwise. We are moving past the era of mere hypothesis and into a phase of experimental justification. The data indicates that the path to truly effective AI across all domains is not as simple as increasing GPU count or scraping more of the internet. There is a fundamental limit to what can be distilled from existing datasets.

Key insight: The 'AI explosion' theory assumes an exponential growth in capability, but actual performance data suggests we are hitting a ceiling where the cost of training outweighs the marginal gains in intelligence.

Models like CLIP (Contrastive Language-Image Pre-training) are used to bridge the gap between visual and textual understanding. By training on pairs of images and text, these models learn a shared embedding space: a numerical fingerprint for meaning in which an image and its matching caption land close together. However, applying these systems to 'downstream tasks' like classification or recommendation reveals a stark reality: without specialized data backing them up, they struggle badly with difficult or specialized problems.
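To make the "shared numerical fingerprint" idea concrete, here is a minimal sketch of how a zero-shot classifier picks a label by comparing an image vector against text vectors. The three-dimensional vectors and the prompt strings are made up for illustration; a real CLIP model produces embeddings with hundreds of dimensions from learned encoders, and this sketch does not call any real CLIP library.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def zero_shot_classify(image_embedding, text_embeddings):
    """Return the prompt whose embedding is most similar to the image embedding."""
    return max(
        text_embeddings,
        key=lambda prompt: cosine_similarity(image_embedding, text_embeddings[prompt]),
    )

# Hypothetical embeddings: invented numbers, not output from a real model.
text_embeddings = {
    "a photo of a cat": [0.9, 0.1, 0.0],
    "a photo of a dog": [0.1, 0.9, 0.0],
    "a photo of an elephant": [0.0, 0.1, 0.9],
}
image_embedding = [0.8, 0.2, 0.1]  # pretend this came from an image encoder

print(zero_shot_classify(image_embedding, text_embeddings))
```

The classifier never saw labeled examples for these prompts; it only ranks similarities. That is why its accuracy on a concept depends so heavily on how well that concept was covered in the pre-training pairs.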

| Scenario | Expected Outcome | Actual Research Finding |
|---|---|---|
| Data Scaling | Exponential intelligence growth | Logarithmic diminishing returns |
| Task Performance | Mastery of niche subjects | Significant degradation in accuracy |
| Training Cost | Justifiable for ROI | Millions spent for 1% improvement |

The Logarithmic Reality of Model Performance

To visualize the current state of AI development, we can look at the relationship between the number of training examples and actual task performance. In an ideal world, this would be a steep, upward-trending line. However, the paper discussed by Dr. Mike Pound provides extensive evidence that the trend is logarithmic. This means that after an initial burst of improvement, the performance curve flattens out significantly. Each additional batch of data buys less accuracy than the one before it, so even doubling or tripling the dataset yields only a small, fixed step, and the gain per added example becomes almost imperceptible.
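A small sketch can show what a logarithmic trend implies. It assumes a hypothetical log-linear curve, accuracy = intercept + slope * log10(n); the function names and the intercept and slope values are invented for illustration and are not taken from the paper.

```python
import math

def log_accuracy(num_examples, intercept=0.0, slope=0.1):
    """Hypothetical log-linear trend: accuracy = intercept + slope * log10(n)."""
    return intercept + slope * math.log10(num_examples)

def gain_from_adding(num_examples, extra=1_000_000):
    """Accuracy gained by adding `extra` examples on top of `num_examples`."""
    return log_accuracy(num_examples + extra) - log_accuracy(num_examples)

# The same one million extra examples are worth less at every larger scale.
scales = [1_000_000, 10_000_000, 100_000_000, 1_000_000_000]
gains = [gain_from_adding(n) for n in scales]
for n, gain in zip(scales, gains):
    print(f"{n:>13,} examples: adding 1M more gains {gain:.5f}")
```

Under this assumed curve, a fixed amount of new data helps a small dataset noticeably and a huge one hardly at all, which is the flattening described above.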

This plateau is not just a minor hurdle; it is a fundamental characteristic of the current Transformer-based architecture. Whether it is image recall, classification, or generative prompts, the majority of models show this same frustrating pattern. It suggests that our current methods of representing data may be reaching their natural limit. If we want to reach a higher level of performance, simply adding more of the same data is unlikely to be the solution.

Caution: Relying solely on scaling current architectures might lead to a 'dead end' where training costs become economically unsustainable for the minimal intelligence boosts achieved.

We are reaching a point where doubling the dataset size to 10 billion images might only result in a 1% increase in accuracy. For a business or a research institution, this raises a critical question: is it worth spending millions of dollars for such a negligible return? This pragmatic interpretation of AI progress suggests that the industry may soon be forced to look beyond the Transformer model for the next big breakthrough.
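The arithmetic behind that question can be sketched by inverting a logarithmic trend. The example assumes, purely for illustration, that every doubling of the dataset adds one percentage point of accuracy; `data_multiplier` and its parameters are hypothetical names, and real curves will have different constants.

```python
def data_multiplier(target_gain_points, points_per_doubling=1.0):
    """How many times more data is needed for a given accuracy gain,
    assuming each doubling of the dataset adds `points_per_doubling` points."""
    return 2 ** (target_gain_points / points_per_doubling)

for gain in (1, 5, 10):
    print(f"+{gain} points needs about {data_multiplier(gain):,.0f}x the data")
```

With these assumed numbers, one extra point costs a second dataset of the same size, while ten extra points cost roughly a thousand times the data. Linear gains require exponential data, which is what makes the return on investment so hard to justify.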

- Performance starts strong with basic concepts

- Scaling data leads to diminishing returns

- The data and compute needed for each further gain grow exponentially as the curve flattens

- Current architectures are reaching a saturation point

The Challenge of Hard Tasks and Data Representation

One of the most significant issues identified in the research is the uneven distribution of concepts within training datasets. Common objects like 'cats' are over-represented by orders of magnitude, which is why AI models are so proficient at identifying or generating them. However, when you move to 'hard tasks'—such as identifying specific species of trees or diagnosing rare medical conditions—the performance drops off a cliff. These niche concepts are under-represented in the general internet data used for training.
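One way to see this imbalance is to count how often each concept appears in the training captions. The sketch below does that on a tiny, made-up caption list; the captions, the concept names, and the simple word-matching rule are all assumptions for illustration, and real pipelines would need far more careful matching.

```python
from collections import Counter

def concept_frequencies(captions, concepts):
    """Count how many captions mention each concept as a whole word."""
    counts = Counter({concept: 0 for concept in concepts})
    for caption in captions:
        words = set(caption.lower().split())
        for concept in concepts:
            if concept in words:
                counts[concept] += 1
    return counts

# Hypothetical captions standing in for web-scraped image-text pairs.
captions = [
    "a cat on a sofa",
    "my cat sleeping",
    "cat and dog playing",
    "a dog in the park",
    "an old larch by the lake",
]
counts = concept_frequencies(captions, ["cat", "dog", "larch"])
for concept, count in counts.most_common():
    print(f"{concept}: {count}")
```

Even in this toy list the common pet dominates and the specific tree species appears once. At internet scale the gap is orders of magnitude wider, and the rare concepts are the ones where performance falls away.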