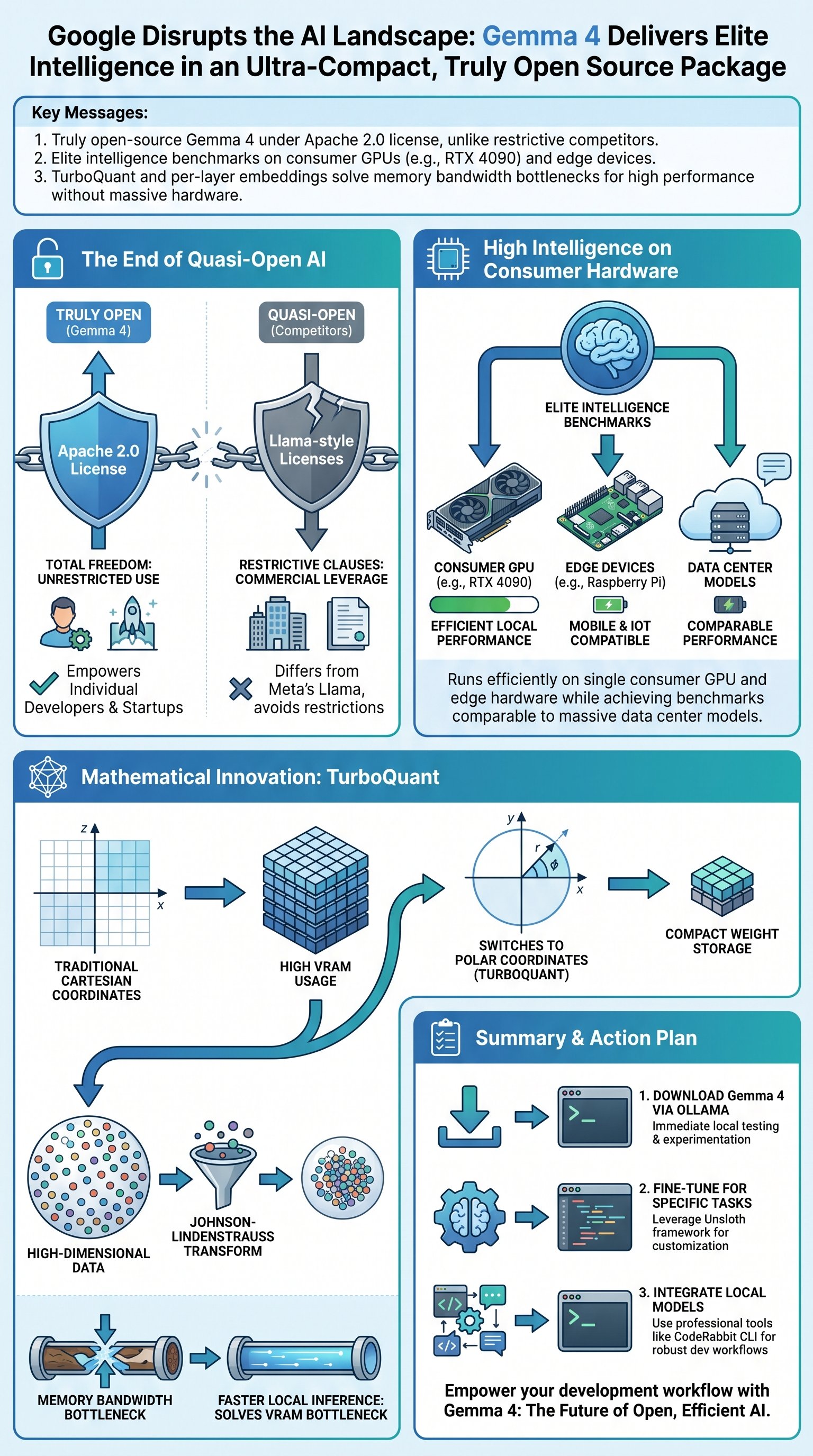

The End of Quasi-Open AI Licensing

Last week, Google did what no other massive tech firm had dared to do: it released a large language model that is truly free and open source under the Apache 2.0 license. This is not another "quasi-open" model with strings attached or restrictive usage clauses.

"Gemma 4 qualifies as truly free and open source under the Apache 2.0 license. That means free as in total freedom."

Historically, companies like Meta have released models that appear open but maintain leverage over developers who actually start making money. These licenses often include "research only" clauses or "please don't sue us" fine print. But Google has finally decided to play a different game entirely.

In fact, the release of Gemma 4 marks a tectonic shift in the industry power balance. It challenges the dominance of proprietary weights and the "open-ish" nature of previous releases. This is the moment the industry moves toward genuine accessibility for all.

Therefore, developers no longer have to worry about the legal landmines buried in traditional AI licenses. They can build, monetize, and scale without asking for permission from a Silicon Valley board. This is the total freedom the community has been demanding for years.

Strategic Insight: Apache 2.0 is a widely used permissive open-source license. It allows commercial use, modification, and redistribution, and it includes an explicit patent grant, so what you build on top of the model remains your own.

However, skepticism remains high among those who have seen "open" models fail to deliver. Many expected a half-baked architecture that required a massive data center to run. But the reality of this model is even more provocative and disruptive than its license.

Intelligence Without the Data Center

Gemma 4 is suspiciously small for its weight class. The 31 billion parameter version competes directly with models like Kimi K2.5 in intelligence benchmarks. However, the hardware requirements tell a completely different story for the local developer.

| Metric | Kimi K2.5 | Gemma 4 (31B) |
|---|---|---|
| Download Size | 600 GB | 20 GB |
| Hardware Needed | Multiple H100s | Single RTX 4090 |
| License Type | Proprietary | Apache 2.0 |

Running a model in this tier can require a download of around 600 GB and massive amounts of VRAM. Gemma 4 achieves similar results with a mere 20 GB file. That is a 30x reduction in download size, a level of shrinkage that looks almost too good to be true.
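As a rough sanity check on those numbers, the file size and parameter count imply an average storage cost per weight. The sketch below assumes decimal gigabytes and treats the entire download as weights, ignoring tokenizer files and metadata. The function name is illustrative, not part of any Gemma tooling.

```python
def bits_per_parameter(file_size_gb: float, num_params: float) -> float:
    """Average bits stored per parameter, assuming the file is all weights."""
    total_bits = file_size_gb * 1e9 * 8  # decimal GB -> bytes -> bits
    return total_bits / num_params


# Figures quoted in the article: a 20 GB file for 31 billion parameters.
gemma_bits = bits_per_parameter(20, 31e9)
print(f"{gemma_bits:.2f} bits per parameter")  # prints "5.16 bits per parameter"
```

For comparison, storing 31 billion parameters at 16 bits each would take about 62 GB. An average of roughly 5 bits per weight is therefore consistent with aggressive quantization rather than anything mathematically impossible.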

But the performance is not just a theoretical number on a spreadsheet. I can get roughly 10 tokens per second on a single consumer-grade GPU. This means you can run state-of-the-art intelligence in your own office without a cloud subscription.

Therefore, the barrier to entry for high-performance AI has been effectively demolished. Small startups can now leverage the same power that was previously reserved for billion-dollar corporations. It is no longer about who has the most silicon, but who has the most creative implementation.

Primary Goal: Achieve data-center-level intelligence on consumer-grade hardware to democratize AI development globally.

In fact, the Edge model is small enough to run on a Raspberry Pi or a standard smartphone. This opens the door for offline AI applications that respect user privacy by design. We are witnessing the death of the mandatory cloud connection for meaningful computation.

Reimagining the Architecture of Quantization

The real bottleneck in AI performance is not the raw processing power of the silicon. It is the memory bandwidth required to read model weights from VRAM during every token generation. Google solved this by attacking the fundamental physics of data storage.
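To see why bandwidth dominates, a back-of-the-envelope ceiling on generation speed divides memory bandwidth by the bytes read per token. The sketch below assumes a dense model whose full weights are read once per generated token, and it ignores KV-cache and activation traffic. It uses about 1,008 GB/s, the published memory bandwidth of an RTX 4090. The function name and the 10 GB comparison point are illustrative.

```python
def max_tokens_per_second(model_size_gb: float, bandwidth_gb_per_s: float) -> float:
    """Upper bound on decode speed if every weight is read once per token."""
    return bandwidth_gb_per_s / model_size_gb


RTX_4090_BANDWIDTH_GB_S = 1008  # published spec; real throughput is lower

# The 20 GB file from the article, and a hypothetical file half that size.
ceiling_20gb = max_tokens_per_second(20, RTX_4090_BANDWIDTH_GB_S)
ceiling_10gb = max_tokens_per_second(10, RTX_4090_BANDWIDTH_GB_S)

print(f"20 GB model: at most {ceiling_20gb:.1f} tokens/s")
print(f"10 GB model: at most {ceiling_10gb:.1f} tokens/s")
```

Under these assumptions the ceiling scales inversely with model size, so halving the bytes per weight doubles the best-case speed. The roughly 10 tokens per second reported earlier sits below the 20 GB ceiling, as expected for an upper bound.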