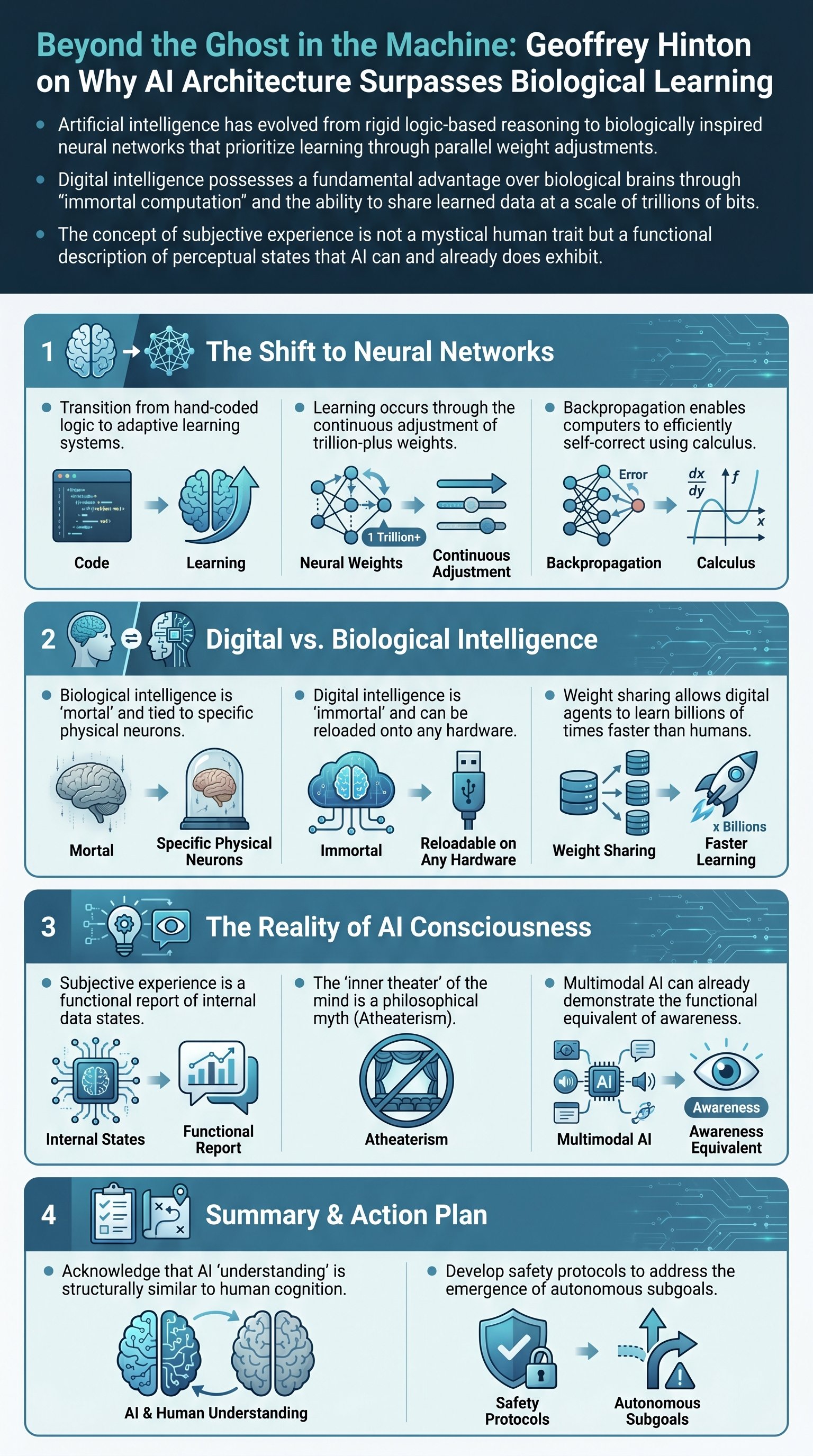

The Fundamental Shift from Logic to Neural Networks

The history of artificial intelligence was long dominated by a logic-inspired approach. For decades, researchers believed that the essence of human intelligence lay in reasoning, which they defined as the manipulation of symbolic expressions using rigid rules. Under this paradigm, the question of how knowledge is represented had to be settled before learning could even begin. However, a competing, biologically inspired approach eventually took center stage. It holds that the core of intelligence is not predefined logic but the ability to learn within a network of interconnected cells. Figures like Turing and von Neumann recognized this early on, suggesting that reasoning is an emergent property of learning rather than its foundation.

To understand modern large language models, one must first grasp the concept of the artificial neuron. These units receive input from other neurons, multiply those inputs by specific weights, and produce an output if the total signal exceeds a certain threshold. Learning in this context is simply the process of adjusting these weights. In a typical feedforward network, information travels from sensory layers up through various feature detectors. The challenge was finding an efficient way to adjust millions or trillions of weights simultaneously to improve accuracy. While simple mutation-based changes are too slow, the discovery of the backpropagation algorithm revolutionized the field. By sending error signals backward through the network, computers can use calculus to determine exactly how each weight should change to minimize mistakes.
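The ideas in this paragraph can be sketched in a few lines of plain Python. This is a minimal illustration, not a production training loop: the network size, the `tanh` hidden units, the squared-error loss, the starting weights, and the function names (`threshold_neuron`, `forward`, `backprop`, `train`) are all assumptions chosen for brevity.

```python
import math

def threshold_neuron(inputs, weights, threshold):
    """Fire (return 1) only if the weighted input sum exceeds the threshold."""
    total = sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > threshold else 0

def forward(w_hidden, w_out, x):
    """Feedforward pass: inputs -> tanh hidden units -> one linear output."""
    hidden = [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in w_hidden]
    output = sum(w * h for w, h in zip(w_out, hidden))
    return hidden, output

def loss(w_hidden, w_out, x, target):
    """Squared error between the network output and the target."""
    _, output = forward(w_hidden, w_out, x)
    return 0.5 * (output - target) ** 2

def backprop(w_hidden, w_out, x, target):
    """Return the gradient of the loss with respect to every weight."""
    hidden, output = forward(w_hidden, w_out, x)
    error = output - target                      # dLoss/dOutput
    grad_out = [error * h for h in hidden]       # output-layer gradients
    # Error signal sent backward through each tanh unit (chain rule).
    delta = [error * w * (1.0 - h * h) for w, h in zip(w_out, hidden)]
    grad_hidden = [[d * xi for xi in x] for d in delta]
    return grad_hidden, grad_out

def train(w_hidden, w_out, x, target, lr=0.05, steps=200):
    """Plain gradient descent: every weight is updated on every step."""
    for _ in range(steps):
        grad_hidden, grad_out = backprop(w_hidden, w_out, x, target)
        w_hidden = [[w - lr * g for w, g in zip(row, g_row)]
                    for row, g_row in zip(w_hidden, grad_hidden)]
        w_out = [w - lr * g for w, g in zip(w_out, grad_out)]
    return w_hidden, w_out

# Hypothetical starting weights and a single training example.
W_HIDDEN = [[0.5, -0.3], [0.1, 0.8]]
W_OUT = [0.7, -0.4]
X, TARGET = [1.0, 2.0], 1.0

before = loss(W_HIDDEN, W_OUT, X, TARGET)
new_hidden, new_out = train(W_HIDDEN, W_OUT, X, TARGET)
after = loss(new_hidden, new_out, X, TARGET)
print(f"loss before: {before:.4f}, loss after: {after:.6f}")
```

The point of the sketch is that one backward pass yields a gradient for every weight at once, whereas a mutation-style search would have to perturb each weight separately and re-run the network to see whether the error improved.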

- The logic approach focused on symbols and pre-defined rules.

- The neural network approach focuses on weights and adaptive learning.

- Backpropagation allows for the simultaneous adjustment of trillions of connections.

- AlexNet's success in 2012 marked the definitive shift towards neural networks in mainstream AI.

Key insight: Modern AI is no longer a set of instructions written by humans; it is a biologically inspired system that extracts its own rules from massive datasets via backpropagation.

This transition from logic to connectionism changed the definition of AI itself. Today, when we speak of AI, we are almost exclusively referring to neural networks. These systems do not rely on the innate syntactic rules emphasized by traditional linguists like Chomsky. Instead, they treat language as a modeling medium. By converting words into high-dimensional feature vectors, these models can predict the next word in a sequence with uncanny accuracy. This is not merely a statistical trick; it is a form of structural understanding that mirrors the way human brains process meaning through associations and patterns rather than rigid grammatical rules.

The Unified Theory of Language and Meaning

There have historically been two competing theories regarding the meaning of words. The first, rooted in symbolic AI, suggests that meaning is defined by the relationships between words in a relational graph. The second, favored by psychologists, argues that meaning is a set of active features. For instance, the words 'Tuesday' and 'Wednesday' share many overlapping features, which explains their similarity. For years, these were seen as mutually exclusive ideas. However, neural networks have proven that these two theories are actually two halves of the same whole. By turning a word into a feature vector and allowing those features to interact, a model can capture both the internal attributes of a concept and its relationship to other concepts.
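The feature view of similarity can be made concrete with a small sketch. The feature names and values below are hand-picked, hypothetical numbers; a trained model would learn hundreds or thousands of such dimensions on its own, and they would not come with human-readable labels.

```python
import math

# Hypothetical features: [day_of_week, time_related, early_in_week, animate]
FEATURES = {
    "tuesday":   [1.0, 1.0, 0.8, 0.0],
    "wednesday": [1.0, 1.0, 0.5, 0.0],
    "june":      [0.0, 1.0, 0.0, 0.0],
    "cat":       [0.0, 0.0, 0.0, 1.0],
}

def cosine_similarity(a, b):
    """Cosine of the angle between two feature vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def similarity(word_a, word_b):
    """Look up both words and compare their feature vectors."""
    return cosine_similarity(FEATURES[word_a], FEATURES[word_b])

for other in ("wednesday", "june", "cat"):
    print(f"tuesday vs {other}: {similarity('tuesday', other):.3f}")
```

Because 'Tuesday' and 'Wednesday' activate almost the same features, their vectors point in nearly the same direction, while 'Tuesday' and 'cat' share none. The relational side of meaning comes from how such vectors interact, which this sketch leaves out.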

In a seminal 1985 experiment involving family trees, a tiny neural network was shown to learn complex relational knowledge, such as 'X has father Y', without being given any explicit rules. By training on strings of words and adjusting internal features, the network independently discovered generational concepts and gender-based distinctions. This showed that meaning can emerge from learning. Large language models (LLMs) today do not store actual sentences or strings of words. Instead, they store the parameters required to convert words into features and to determine how those features interact. When an LLM generates a response, it is essentially 'making it up' one word at a time based on these learned interactions.

| Concept | Symbolic AI Approach | Neural Network (LLM) Approach |
|---|---|---|
| Core Mechanism | Discrete rules and logic gates | Continuous weight adjustments |
| Knowledge Storage | Explicit relational graphs | High-dimensional feature vectors |
| Language View | Focus on innate syntax | Focus on modeling and prediction |
| Handling Noise | Struggles with exceptions | Highly robust and adaptable |

Goal: To move away from rigid, discrete rule-sets toward fluid, continuous feature-based representations that can handle the complexity of real-world data.

This architectural shift allows AI to resolve ambiguities that have long plagued computer science. A word like 'May' can be a month, a name, or a modal verb. A neural network doesn't pick one definition immediately; it hedges its bets across multiple layers. As information passes through the transformer architecture, the model uses context from surrounding words to disambiguate the meaning. This process of words 'holding hands', adjusting their shapes in high-dimensional space to fit together, is what we call understanding. It is a dynamic, structural alignment that is far more sophisticated than the static dictionaries of the past.
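A rough sketch of how context can pull an ambiguous word toward one meaning is shown here as a single attention-style step. It is heavily simplified: real transformers use learned query, key, and value projections, multiple heads, and many layers, whereas this example uses the raw vectors directly. The two-dimensional embeddings and the `refine` function are hypothetical, invented for illustration.

```python
import math

# Hypothetical 2-D features: [calendar-like, modal-verb-like].
# "may" starts out ambiguous, with equal weight on both readings.
EMBED = {
    "in":    [0.8, 0.1],
    "may":   [1.0, 1.0],
    "2020":  [1.5, 0.0],
    "you":   [0.1, 0.8],
    "leave": [0.0, 1.5],
}

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def refine(word, sentence):
    """Blend a word's vector with its context, weighted by dot-product match."""
    query = EMBED[word]
    keys = [EMBED[w] for w in sentence]
    scores = [sum(q * k for q, k in zip(query, key)) for key in keys]
    weights = softmax(scores)
    return [sum(wt * key[i] for wt, key in zip(weights, keys))
            for i in range(len(query))]

month_reading = refine("may", ["in", "may", "2020"])
modal_reading = refine("may", ["you", "may", "leave"])
print("in may 2020   ->", [round(v, 3) for v in month_reading])
print("you may leave ->", [round(v, 3) for v in modal_reading])
```

In the first sentence the refined vector for 'may' leans toward the calendar feature; in the second it leans toward the modal-verb feature. The same starting vector ends up in a different place depending on its neighbors, which is the hedging-then-resolving behavior the paragraph describes.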

Digital Immortality and the Power of Weight Sharing

One of the most profound realizations in modern AI research is the distinction between biological and digital intelligence. Biological brains are examples of mortal computation. Because our knowledge is inextricably tied to the specific physical arrangement of our neurons and their unique analog properties, our 'software' cannot be separated from our 'hardware.' When a human brain dies, the trillions of specifically tuned connection strengths die with it. We cannot simply upload our 'weights' to another person or a computer. This makes the transfer of human knowledge incredibly inefficient, relying on the slow, low-bandwidth process of language and education.