Why AI Videos Still Move Like Nightmares

Generative AI has conquered the static image, and video models now produce strikingly photorealistic footage at negligible cost. Every frame looks like a masterpiece of light and shadow.

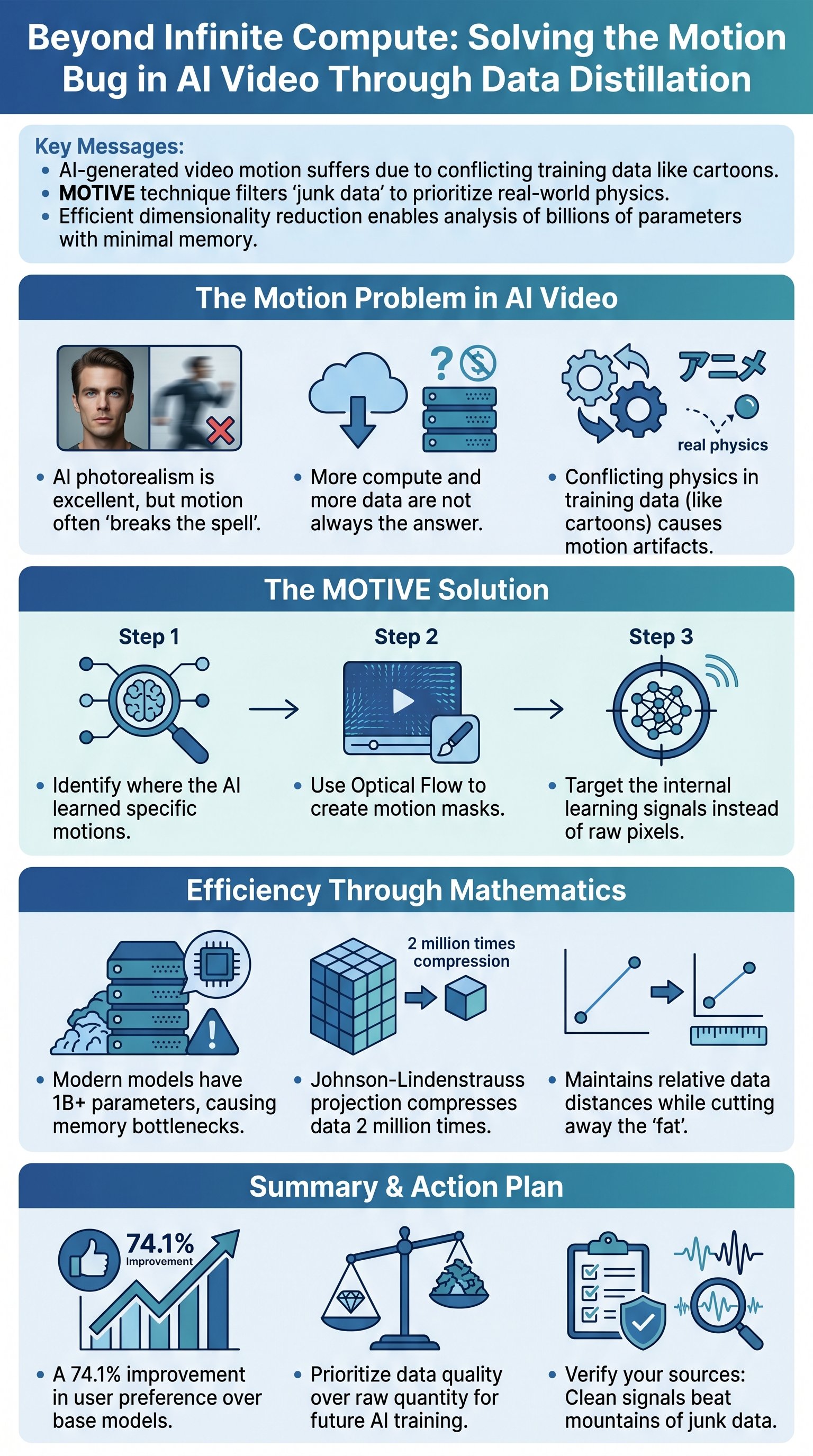

But the illusion breaks the moment things start moving. While photorealism is second to none, the physical logic is fundamentally broken. Objects float, gravity fails, and characters move with a haunting, rubbery quality.

Many researchers call for more compute and more data to solve this, on the belief that scale fixes every AI failure. If the motion is bad, they throw more billions at the problem.

Motion breaks the spell even when the individual frame is impeccable.

To be fair, scale does help: OpenAI's Sora samples improved visibly when training compute was increased 32-fold. But compute is a brute-force weapon that ignores the underlying rot. It hides the symptoms without curing the disease of bad physics.

The result is a clash between visual perfection and physical illiteracy. The industry is obsessed with the wrong metrics: it is chasing pixels when it should be chasing the laws of nature.

The frame looks right while the movement feels wrong. This creates an uncanny valley of motion that no amount of resolution can fix. We need a different approach to make AI understand how the world actually works.

The Toxic Influence of Cartoon Physics

We often assume that more data equals more intelligence. This is a dangerous fallacy in the world of machine learning. Not all data is created equal, and some of it is actively harmful.

Training sets are filled with deeply conflicting information. Cartoons, for instance, teach AI that bodies bounce like rubber and gravity is merely a suggestion. These frames are visually high-quality but physically impossible.

Cartoons teach conflicting information that deforms the model's understanding of reality.

In cartoons, the rules of motion are played for laughs. These "fun" elements are catastrophic for an AI model trying to learn real-world dynamics:

- Characters pausing midair before falling.

- Bodies snapping back into shape instantly.

- Floating objects with no physical inertia.

- Extreme exaggerations of weight and mass.

As a result, the model has no way to tell which clips obey real physics. It gives a realistic fall and a slapstick hover equal weight, so its learned physics becomes a chaotic mix of slapstick humor and actual science.
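To make the "equal weight" problem concrete, here is a toy sketch, not anything a real video model does. It assumes a one-parameter "physics model" that fits gravity from drop distances, and the function name `fit_gravity` and the synthetic clips are invented for illustration. Half the clips fall normally and half hang in midair like a cartoon character.

```python
import numpy as np

def fit_gravity(times, drops):
    """Least-squares fit of g in the free-fall law drop = 0.5 * g * t**2."""
    x = 0.5 * times**2
    return float(x @ drops / (x @ x))

# Ten timestamps between 0.1 s and 1.0 s (synthetic, for illustration only).
t = np.linspace(0.1, 1.0, 10)

real_drops = 0.5 * 9.81 * t**2      # objects that actually fall
cartoon_drops = np.zeros_like(t)    # a character hovering in midair

g_real = fit_gravity(t, real_drops)
g_mixed = fit_gravity(
    np.concatenate([t, t]),
    np.concatenate([real_drops, cartoon_drops]),
)

print(f"real clips only:  g = {g_real:.3f}")
print(f"real + cartoon:   g = {g_mixed:.3f}")
```

With equal amounts of each kind of clip, the fitted gravity lands halfway between the real value and zero. The model does not pick one physics or the other; it averages them into something that exists in neither world.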

This junk data is the primary bottleneck for video generation. It is a virus living inside the neural network's weights. Instead of adding more noise, we must learn to prune the garden. Strategic subtraction is more powerful than mindless addition.

The Surgical Precision of MOTIVE

Researchers have finally developed a technique to interrogate the AI's memory. This method, known as MOTIVE, identifies the bad influences within the dataset. It targets the specific videos that cause physical errors.
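The idea of tracing a bad output back to the training clips behind it can be sketched with a generic gradient-similarity score. This is not MOTIVE's actual algorithm, which is not described here. It is a minimal stand-in that assumes a one-parameter gravity model, and every name in it (`loss_grad`, `is_cartoon`, the synthetic clips) is hypothetical. A training clip gets a negative score when a gradient step on it would push the model away from a physically correct query clip.

```python
import numpy as np

def loss_grad(g, x, y):
    """Gradient of the squared error 0.5 * (g * x - y)**2 with respect to g."""
    return (g * x - y) * x

# Synthetic training set: 10 real falls followed by 10 cartoon hovers.
t = np.linspace(0.1, 1.0, 10)
x = 0.5 * t**2
train_x = np.concatenate([x, x])
train_y = np.concatenate([9.81 * x, np.zeros_like(x)])
is_cartoon = np.array([False] * 10 + [True] * 10)

# "Trained" model: least-squares gravity on the mixed data.
g = float(train_x @ train_y / (train_x @ train_x))

# Query: a physically correct fall observed at t = 0.8 s.
query_x = 0.5 * 0.8**2
query_y = 9.81 * query_x

# Score each training clip by how well its gradient aligns with the query's.
scores = loss_grad(g, train_x, train_y) * loss_grad(g, query_x, query_y)
flagged = scores < 0  # negative alignment: the clip works against the query

# Strategic subtraction: refit after dropping the flagged clips.
keep = ~flagged
g_clean = float(train_x[keep] @ train_y[keep] / (train_x[keep] @ train_x[keep]))

print(f"mixed g = {g:.3f}, flagged {int(flagged.sum())} clips, cleaned g = {g_clean:.3f}")
```

In this toy setup the flagged clips are exactly the cartoon ones, and removing them restores the real value of gravity. A real video model has billions of parameters rather than one, so any practical method needs a much cheaper and more careful way to compute such scores.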