The Foundations of Modern Computing: From Mechanical Sheets to Silicon Chips

The history of computing began long before the sleek laptops we use today. Between 1936 and 1938, Konrad Zuse built the Z1 in his parents' living room in Berlin, a machine often credited as the first freely programmable binary computer. It was entirely mechanical, with roughly 20,000 parts, including the sliding metal sheets that represented binary data. Although its clock ran at only about 1 hertz, it demonstrated Boolean logic and binary floating-point arithmetic, both of which we still rely on. The move from mechanical parts to the Von Neumann architecture, described in 1945, changed everything by defining how data and instructions could share the same memory space, a concept that remains the backbone of modern hardware design.

The real breakthrough came with the invention of the transistor at Bell Labs in 1947, a semiconductor device capable of switching or amplifying electrical signals. It allowed binary 1s and 0s to be represented by electrical signals rather than physical movement. In 1971, Intel released the first commercial microprocessor, the 4004. This 4-bit processor contained 2,300 transistors and ran at 740 kilohertz, a far cry from the gigahertz speeds of today but a monumental step forward for integrated circuits. Understanding this lineage helps any professional grasp how current processing units like the CPU and GPU operate at a physical level.

Key insight: The Von Neumann architecture revolutionized computing by allowing a single processing unit to handle both data and instructions stored in a unified memory space.
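To make the stored-program idea concrete, here is a minimal sketch of a toy machine whose instructions and data sit in the same memory list. The opcodes, the two-cell instruction encoding, and the `run` function are hypothetical, invented for illustration, and do not correspond to any real instruction set.

```python
# Toy stored-program machine: instructions and data live in one memory list.
# The opcodes and the encoding are hypothetical, chosen for illustration only.
LOAD, ADD, STORE, HALT = 1, 2, 3, 0

def run(memory):
    """Fetch, decode, and execute until HALT; returns the (mutated) memory."""
    acc = 0   # accumulator register
    pc = 0    # program counter: address of the next instruction
    while True:
        # Fetch the opcode and its operand from the shared memory.
        opcode, operand = memory[pc], memory[pc + 1]
        pc += 2
        if opcode == LOAD:
            acc = memory[operand]
        elif opcode == ADD:
            acc += memory[operand]
        elif opcode == STORE:
            memory[operand] = acc
        elif opcode == HALT:
            return memory
        else:
            raise ValueError(f"unknown opcode {opcode}")

# Addresses 0-7 hold the program, addresses 8-10 hold the data.
memory = [
    LOAD, 8,    # acc = memory[8]
    ADD, 9,     # acc += memory[9]
    STORE, 10,  # memory[10] = acc
    HALT, 0,
    2, 3, 0,    # data: two operands and a result slot
]
result = run(memory)
print(result[10])  # sum of the two operands
```

Because the program is just numbers in memory, the same `STORE` instruction that writes a result could also overwrite an instruction, which is exactly the flexibility (and the risk) that a unified memory space brings.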

Modern silicon chips are essentially billions of microscopic transistors fabricated on a silicon substrate. Each transistor can be switched between a conducting and a non-conducting state, which lets electrical engineers combine them into logic gates and, from those, into complex circuits. As software engineers, we harness this electrical magic to build the digital 'illusions' that drive our modern world. Whether it is an operating system or a mobile app, every line of code eventually translates back to the binary language these transistors speak. The transition from mechanical sheets to nanometer-scale transistors represents one of the most significant technological leaps in human history.
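As a rough illustration of how simple switches compose into arithmetic, the sketch below builds the usual gates from a single NAND function and then chains them into a 4-bit ripple-carry adder. It models the logic only, not the electrical behavior of real transistors, and all function names are chosen for this example.

```python
def nand(a, b):
    """Output is 0 only when both inputs are 1."""
    return 0 if (a and b) else 1

# Every other gate below is built from NAND alone.
def not_(a):
    return nand(a, a)

def and_(a, b):
    return not_(nand(a, b))

def or_(a, b):
    return nand(not_(a), not_(b))

def xor(a, b):
    return and_(or_(a, b), nand(a, b))

def half_adder(a, b):
    """Add two bits; returns (sum_bit, carry_bit)."""
    return xor(a, b), and_(a, b)

def full_adder(a, b, carry_in):
    """Add two bits plus an incoming carry; returns (sum_bit, carry_out)."""
    s1, c1 = half_adder(a, b)
    s2, c2 = half_adder(s1, carry_in)
    return s2, or_(c1, c2)

def add(x, y, width=4):
    """Ripple-carry addition of two unsigned integers, one bit at a time."""
    carry, total = 0, 0
    for i in range(width):
        bit, carry = full_adder((x >> i) & 1, (y >> i) & 1, carry)
        total |= bit << i
    return total, carry  # carry is 1 when the result overflowed `width` bits

print(add(5, 6))
print(add(9, 8))
```

With a width of 4 bits, the second call overflows: the carry bit comes back set and only the low four bits of the sum remain.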

| Era | Technology | Clock speed | Innovation |
|---|---|---|---|
| 1936-1938 | Z1 (Konrad Zuse) | about 1 Hz | First programmable mechanical computer |
| 1945 | Von Neumann architecture | N/A | Unified memory for data and instructions |
| 1971 | Intel 4004 | 740 kHz | First commercial microprocessor |
| Modern | Silicon chips | 3+ GHz | Billions of transistors on a single die |

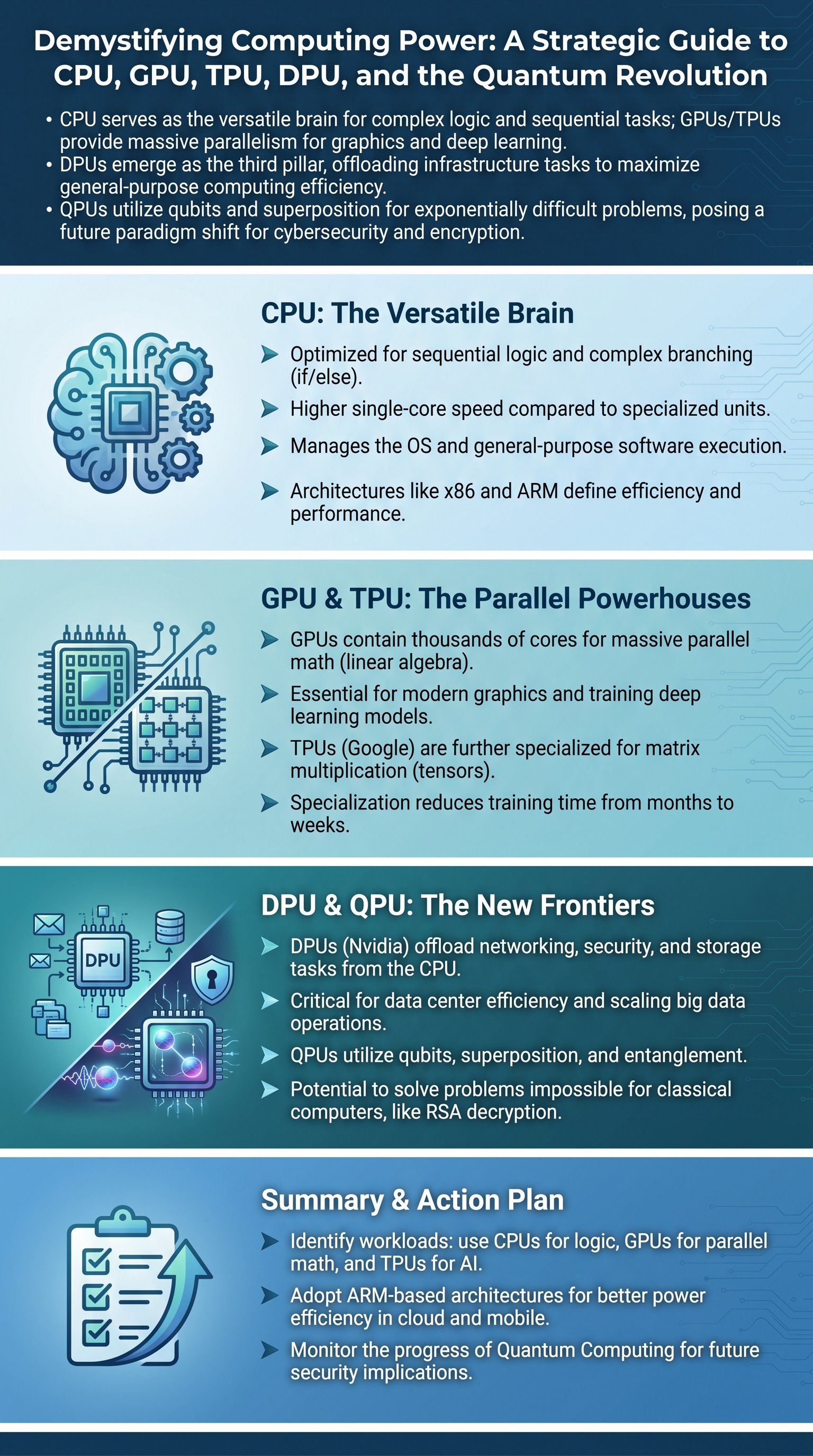

CPU vs. GPU: Balancing Sequential Logic with Massive Parallelism

The Central Processing Unit (CPU) is often described as the brain of the computer, and for good reason. It is designed to run the operating system, manage hardware, and execute programs that require complex branching logic. A CPU is optimized for sequential computation, meaning it excels at tasks where the next step depends on the outcome of the previous one. Think of navigation algorithms that calculate the shortest route; these involve many 'if-else' conditional statements that must be processed one by one. Modern CPUs like the Apple M2 Ultra or Intel Core i9 have multiple cores so that several tasks can run at once, but there is a practical limit to how many cores can be added before heat and power consumption outweigh the benefits.

In contrast, the Graphics Processing Unit (GPU) was originally designed for the specialized task of rendering images. While a high-end CPU might have 24 to 128 cores, a modern GPU like the Nvidia RTX 4080 contains 9,728 CUDA cores. These cores are far simpler and less versatile than CPU cores: each is designed for simple, repetitive mathematical operations, and large groups of them apply the same operation to many data elements at once. This suits linear algebra and matrix multiplication in particular, which makes GPUs a good fit for calculating the behavior of light and shadows in video games, or for training massive deep learning models that process millions of data points simultaneously. A CPU is a versatile tool for any task, while a GPU is a specialized powerhouse for parallel math.
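The difference between the two workloads comes down to data dependencies, which the following sketch tries to show in plain Python. It runs entirely on the CPU and is not GPU code; the Collatz loop and the helper names are stand-ins picked for this example. The first function has a chain of dependent, branching steps, while each row of the matrix-vector product can be computed in any order, which is the property parallel hardware exploits.

```python
def collatz_steps(n):
    """Sequential: each iteration branches on the value produced by the last."""
    steps = 0
    while n != 1:
        n = n // 2 if n % 2 == 0 else 3 * n + 1
        steps += 1
    return steps

def matvec_row(matrix, vector, i):
    """One output element of a matrix-vector product; needs no other row."""
    return sum(m * v for m, v in zip(matrix[i], vector))

def matvec_any_order(matrix, vector, order):
    """Compute the rows in an arbitrary order, as independent workers could."""
    out = [None] * len(matrix)
    for i in order:
        out[i] = matvec_row(matrix, vector, i)
    return out

matrix = [[1, 2], [3, 4], [5, 6]]
vector = [10, 1]

in_order = matvec_any_order(matrix, vector, [0, 1, 2])
shuffled = matvec_any_order(matrix, vector, [2, 0, 1])
print(in_order == shuffled)  # row order does not affect the result
print(collatz_steps(6))      # cannot be reordered: step k needs step k-1
```

No iteration of `collatz_steps` can start before the previous one finishes, so extra cores do not help it, whereas the three rows of the product could each go to a separate core.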

Caution: Simply adding more CPU cores leads to diminishing returns due to power consumption and heat dissipation challenges.

Instruction set architectures also play a crucial role in performance and efficiency. For decades, the x86 family (today x86-64) dominated the desktop market. However, the ARM architecture has become increasingly popular thanks to its reduced instruction set (RISC) design and strong power efficiency. This shift is evident in mobile devices and the recent success of Apple Silicon. Cloud providers are also adopting ARM-based chips such as AWS Graviton3 to reduce data center power costs. As a developer or business leader, understanding whether your workload requires the high-speed sequential logic of a CPU or the massive parallel throughput of a GPU is critical for optimizing performance and cost.

- CPU Strengths: Complex branching, sequential logic, general-purpose tasks, high single-core speed.

- GPU Strengths: Parallel computing, linear algebra, image rendering, deep learning training.

- Efficiency Shift: ARM architecture is moving from mobile-only to high-performance desktop and cloud environments.

The Rise of Specialized Units: Understanding TPU and DPU

As Artificial Intelligence (AI) and Big Data became dominant forces, the industry realized that even GPUs had limitations. This led to the Tensor Processing Unit (TPU), which Google announced in 2016. A TPU is specifically engineered for tensor operations, the building blocks of deep learning. Where a GPU repeatedly reads operands from registers or shared memory and writes results back, a TPU arranges a large grid of multiply-accumulate units so that intermediate results pass directly from one unit to the next, performing massive matrix multiplications in hardware with far fewer memory accesses. For organizations training neural networks that would otherwise take months, a TPU may reduce training time to weeks and substantially lower compute costs, depending on the workload. It is one of the clearest examples of hardware specialization for the AI era.
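To show what a multiply-accumulate step actually computes, here is a small software sketch of matrix multiplication written as repeated multiply-accumulate operations. It is a sequential model of the arithmetic only, under the assumption that each `mac` call stands in for one hardware unit's work; it says nothing about how a real TPU schedules or wires those units.

```python
def mac(acc, a, b):
    """One multiply-accumulate step: returns acc + a * b."""
    return acc + a * b

def matmul(A, B):
    """Multiply an n x k matrix by a k x m matrix using only mac steps."""
    n, k, m = len(A), len(B), len(B[0])
    C = [[0] * m for _ in range(n)]
    for i in range(n):
        for j in range(m):
            acc = 0
            for p in range(k):
                # Each output element is a running sum of k products.
                acc = mac(acc, A[i][p], B[p][j])
            C[i][j] = acc
    return C

A = [[1, 2], [3, 4]]
B = [[5, 6], [7, 8]]
C = matmul(A, B)
print(C)
```

An n x k by k x m product needs n * m * k of these steps (8 for the 2 x 2 case above), and every output element's accumulation is independent of the others, which is why dedicating hardware to this one operation pays off.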